The Quality of Education That Comes with AI

Remember the last time you sat staring at a textbook, stuck on page 42, with no one available to help you understand a difficult concept? In a traditional educational setting, that moment often marks the end of learning for the night, leaving a gap in understanding that grows over time. Now, imagine if the book could talk back—not just to provide the answer, but to explain the concept in five different ways until one finally clicked. This shift illustrates the core promise of the future of education: moving from static, one-size-fits-all instruction to a dynamic dialogue that adapts to the learner.

We are living through the “Calculator Moment” for the human mind. Just as the pocket calculator fundamentally changed how math was taught—shifting the focus from manual calculation to complex problem-solving—artificial intelligence is forcing a re-evaluation of what it means to be educated. Educational experts argue that this shift requires us to distinguish between simple information retrieval and deep pedagogical learning. When the answer to any factual question is a click away, the true quality of education is no longer measured by what a student can memorize, but by how well they can synthesize and apply information.

However, having the world’s smartest tutor in your pocket does not automatically make you a better student. There is a profound difference between using AI as a “GPS” that blindly dictates every turn and using it as a navigational coach that teaches you to read the map yourself. Classroom discussions often center on cheating, but the deeper issue is the distinction between answer-seeking and understanding-building. High-quality AI integration relies on three distinct pillars—personalization, immediate feedback loops, and critical engagement—which prevent the technology from becoming a crutch.

Navigating this transition requires looking beyond the fear of automation to understand the mechanics of quality instruction. By examining how these tools can act as supportive scaffolds rather than replacements for human teachers, we can answer the central question: what is the quality of education that comes with AI? The objective is to ensure that when students engage with technology, they are challenged to think harder, deeper, and more critically than before.

Why One-Size-Fits-All is Over: The Power of Personalized Learning Pathways

Most of us experienced school as an “off-the-rack” product: one teacher, one textbook, and thirty students all trying to move at the same pace. It’s a model that inevitably leaves some students bored because the material is too easy, while others are left behind because the class moved on before they truly understood the lesson. AI-driven personalized learning pathways are dismantling this factory-style approach, replacing it with a “tailor-made” curriculum that fits what each learner already knows and where they still have gaps.

Educational researchers have known for decades that one-on-one attention is the gold standard for learning, but it has always been too expensive to scale. In 1984, researcher Benjamin Bloom described what is now called “Bloom’s 2-Sigma Problem.” He reported that the average student who received one-on-one tutoring performed about two standard deviations above the average of a conventional classroom, which is better than roughly 98% of conventionally taught students. The “problem” was simply logistical: society could not afford to provide a human tutor for every single child. Generative AI offers a potential way to close this resource gap, acting as a tireless tutor that can deliver a high level of individual attention to everyone.
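The 98% figure follows from the “2 sigma” in the problem’s name. Here is a minimal sketch of that arithmetic. It assumes classroom scores are roughly normally distributed, which is a simplification made for this example, and the function name `share_below` is invented for illustration.

```python
import math

def share_below(z: float) -> float:
    """Share of a normal distribution that lies below z standard deviations above the mean."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

# A student two standard deviations above the class average
print(f"{share_below(2.0):.1%}")  # 97.7%
```

Under that assumption, a student two standard deviations above the mean outperforms about 97.7% of the class, which rounds to the 98% usually quoted.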

Instead of a static textbook that reads the same way for everyone, adaptive learning systems function like a GPS that recalculates the route when a driver misses a turn. If a student struggles with a concept, the AI engages in “scaffolding.” Much like the temporary structure used to support a building under construction, the AI provides extra hints, simplified explanations, or broken-down steps. As the learner demonstrates understanding, the AI slowly removes these supports until the student can solve the problem independently.

This constant, real-time adjustment keeps the learner in the optimal zone: challenging enough to be interesting, but not so difficult that it becomes discouraging. The benefits of adaptive learning technology are clear when we look at how it changes the emotional experience of schooling:

- Mastery over speed: Students move forward only when they truly grasp the material, preventing gaps in knowledge from compounding over time.

- Reduced frustration: Because the difficulty adjusts instantly, students are less likely to “rage quit” difficult subjects.

- Higher engagement: Learning feels like a responsive conversation rather than a passive lecture.
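For readers curious how scaffolding might look in software, here is a minimal sketch. It is not how any particular product works. The support levels, the `Scaffold` class, and the rule of fading support after two correct answers in a row are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical support levels, ordered from most help to none.
SUPPORT_LEVELS = ["worked example", "step-by-step hints", "single hint", "no support"]

@dataclass
class Scaffold:
    level: int = 0           # index into SUPPORT_LEVELS; start with the most support
    streak: int = 0          # consecutive correct answers at the current level
    streak_to_fade: int = 2  # assumed threshold for removing one layer of support

    def record(self, correct: bool) -> str:
        """Update the support level after one answer and return the level now in use."""
        if correct:
            self.streak += 1
            if self.streak >= self.streak_to_fade and self.level < len(SUPPORT_LEVELS) - 1:
                self.level += 1  # fade: remove one layer of support
                self.streak = 0
        else:
            self.streak = 0
            if self.level > 0:
                self.level -= 1  # restore one layer of support after a miss
        return SUPPORT_LEVELS[self.level]

scaffold = Scaffold()
for answer in [True, True, False, True, True, True, True]:
    print(scaffold.record(answer))
```

In this run the learner earns less support after two correct answers, gets it back after a miss, and ends at “single hint” once understanding is demonstrated again. This is the add-then-remove pattern the scaffolding metaphor describes.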

Once a student has a pathway tailored to their speed, the next piece of the puzzle is how quickly they find out if they are on the right track.

The End of the Waiting Game: How Instant Feedback Transforms Retention

Think back to the last time you handed in a major assignment or took a difficult exam. You likely waited days, or even weeks, to see the red ink marks that indicated where you went wrong. By the time that paper returned to your desk, your brain had already flushed the relevant details to make room for new information. This delay creates a “retention gap,” disconnecting the mistake from the correction and turning grading into a purely administrative task rather than a learning opportunity.

In contrast, artificial intelligence excels at closing this loop instantly. Much like a video game that immediately signals when you’ve missed a jump, AI-driven tools provide real-time correction while the concept is still fresh in the learner’s mind. The timing matters because a correction is easiest to absorb when the student can still recall the reasoning that produced the mistake. When a student solves a math equation and gets immediate confirmation, the correct approach is reinforced; if they are wrong, the system nudges them toward the correct logic before a bad habit takes root. This turns assessment into a low-stakes, continuous conversation rather than a high-pressure final judgment.

However, the reliability of this automated feedback depends heavily on the subject matter. In objective domains like mathematics, coding, or foreign language grammar, AI tools can act as a highly reliable editor, spotting most syntax errors and calculation mistakes, though their output still deserves a spot check. In subjective areas like creative writing or philosophy, the technology acts more like a mirror than a judge. It can critique structure and clarity, but it often struggles to evaluate the nuance of an argument or the emotional resonance of a story. A human teacher is still required to bridge this gap, ensuring that the feedback improves the student’s thinking rather than just sanitizing their writing style until it sounds robotic.

This capability to grade instantly ultimately changes the student’s relationship with failure, allowing them to view errors as stepping stones rather than verdicts. But when the correct answers and perfect corrections are generated this easily, a new concern arises for parents and educators: distinguishing between a student who is genuinely using the tool to learn and one who is simply bypassing the work entirely.

Bypassing the ‘Cheating’ Debate: How to Use AI for Deep Research and Scaffolding

The immediate fear for most parents and teachers is that AI will turn education into a massive copy-paste exercise, effectively outsourcing the human brain. While it is true that these tools can generate an essay on The Great Gatsby in seconds, focusing solely on plagiarism misses the more profound opportunity for “scaffolding.” In educational theory, a scaffold is a temporary support structure—much like training wheels on a bicycle—that allows a learner to tackle a complex task they could not yet handle alone. The goal of this technology should not be to ride the bike for the student, but to hold them steady until they find their own balance.

Instead of asking for a finished product, savvy learners are using AI as an indefatigable brainstorming partner to overcome the paralysis of the blank page. A student struggling to start a history paper can describe their vague ideas to the software and ask for an outline or a list of potential counter-arguments. This process transforms the AI from a ghostwriter into a sounding board. It forces the student to evaluate the suggestions, choose the strongest points, and arrange them logically, maintaining active engagement with the material while bypassing the frustration of getting started.

One of the most powerful techniques involves flipping the interaction entirely through what is known as “Socratic prompting.” By instructing the AI to act as a debate opponent or a curious interviewer, the student is forced to defend their thesis and clarify their thinking. For example, rather than asking “What are the causes of the Civil War?”, a learner can prompt the system to “Ask me three difficult questions about the economic factors of the Civil War to test my knowledge.” This method ensures the human generates the actual answers, using the technology only to probe the depth of their understanding.
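If you reuse this kind of prompt often, it can be turned into a small template. The sketch below is one possible way to do that. The function name `socratic_prompt` and the added instruction to ask one question at a time are assumptions for illustration, not a standard recipe.

```python
def socratic_prompt(topic: str, n_questions: int = 3) -> str:
    """Build a prompt that asks the AI to quiz the learner instead of answering for them."""
    return (
        f"Ask me {n_questions} difficult questions about {topic} to test my knowledge. "
        "Ask one question at a time and wait for my answer before giving feedback."
    )

print(socratic_prompt("the economic factors of the Civil War"))
```

The resulting text can be pasted into any chatbot. The point of the template is that the learner supplies the topic and the answers, while the tool supplies only the questions.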

Relying on digital assistants does come with a significant catch: the persistent issue of “hallucinations.” Generative AI models are prediction engines, not fact databases, and they will occasionally invent dates, quotes, or events with unwavering confidence. This limitation actually provides a hidden educational benefit by mandating a new layer of verification. Students must learn to cross-reference AI-generated claims with trusted primary sources, turning the research process into a detective game where skepticism is just as important as curiosity.

Navigating this fine line between helpful support and intellectual laziness requires more than just good software; it requires a fundamental shift in how we view the classroom hierarchy. If the machine handles the information retrieval and the scaffolding, the human element becomes less about delivering facts and more about mentorship, setting the stage for a completely new educational dynamic.

From Sage on the Stage to Guide on the Side: The New Role of Human Educators

In the traditional classroom, the teacher was often viewed as the “Sage on the Stage,” the solitary source of wisdom pouring facts into listening students. Today, however, when a student can query an AI for the history of the Roman Empire and get a tailored response instantly, that monopoly on information is broken. This doesn’t render the educator obsolete; rather, it elevates their role from a content delivery system to a high-level mentor. The true value of a teacher is no longer found in knowing the answer, but in helping the student understand why the answer matters and how to apply it to the real world.

The sheer volume of administrative work that currently consumes a teacher’s day often leaves little room for individual attention. Grading quizzes, tracking attendance patterns, and identifying grammar mistakes can take hours—time that isn’t spent actually teaching. AI tools are uniquely positioned to automate these logistical burdens. By handling the “low-level” cognitive labor, software can process patterns in student performance that might go unnoticed by a busy human eye, alerting the instructor when a student begins to fall behind weeks before a failing grade appears on a report card.

This technological partnership creates a clear division of labor, allowing each party to focus on its specific strengths. While algorithms are incredibly efficient at processing data and scaling content, they lack the social-emotional intelligence required to nurture a developing mind. The classroom dynamic shifts to balance these capabilities:

- AI Responsibilities: Providing instant feedback on math problems, generating practice quizzes, translating materials for ESL students, and handling scheduling.

- Human Responsibilities: Resolving peer conflicts, motivating a discouraged student, modeling empathy, and sparking curiosity when a learner wants to give up.

Ultimately, this transition solidifies the “Guide on the Side” model. In this setup, the teacher walks among the students, offering targeted intervention and emotional support, while the AI provides personalized practice. The quality of education improves not because the technology is perfect, but because the human is finally free to focus on mentorship rather than management. However, relying on these systems tempts us to trust their output implicitly, which raises critical questions about the prejudices that may be lurking within the code.

The Quality Gap: Navigating Algorithmic Bias and Ethical Integrity in Schools

Trusting a computer screen feels natural because we often associate digital systems with mathematical objectivity. However, generative AI is less like a calculator and more like a mirror reflecting the massive datasets it consumed during training. If an educational model was fed libraries of older literature where doctors were exclusively depicted as men and nurses as women, the AI will likely replicate those outdated stereotypes in its creative writing prompts and math word problems. This “algorithmic bias” creates a hidden quality gap. A student isn’t just learning arithmetic or grammar; they are also inadvertently absorbing the prejudices of the past, and the marginalization of certain groups is effectively automated before a human teacher ever intervenes.

Beyond social stereotypes, these systems can inadvertently degrade critical thinking through the “Echo Chamber Effect.” High-quality education relies on productive friction—the moment a teacher challenges a student’s weak argument or forces them to consider an opposing viewpoint. AI models, conversely, are trained to be helpful and conversational, often prioritizing a smooth interaction over a rigorous one. If a student proposes a flawed historical theory to a chatbot, the system might validate the premise simply to keep the conversation flowing, acting more like a sycophantic assistant than a challenging tutor. This lack of resistance removes the mental struggle necessary for deep learning, leaving students with a false sense of mastery over complex topics.

Maintaining ethical integrity in schools, therefore, requires treating AI output as a rough draft rather than a final authority. The most valuable role for parents and educators today is teaching students to act as auditors who verify sources and question the machine’s logic. By shifting the focus from passive consumption to active interrogation of technology, we turn a potential risk into a powerful teaching moment. This ability to critically evaluate and manage automated information is not just an academic exercise; it is rapidly becoming the defining prerequisite for professional success in the emerging economy.

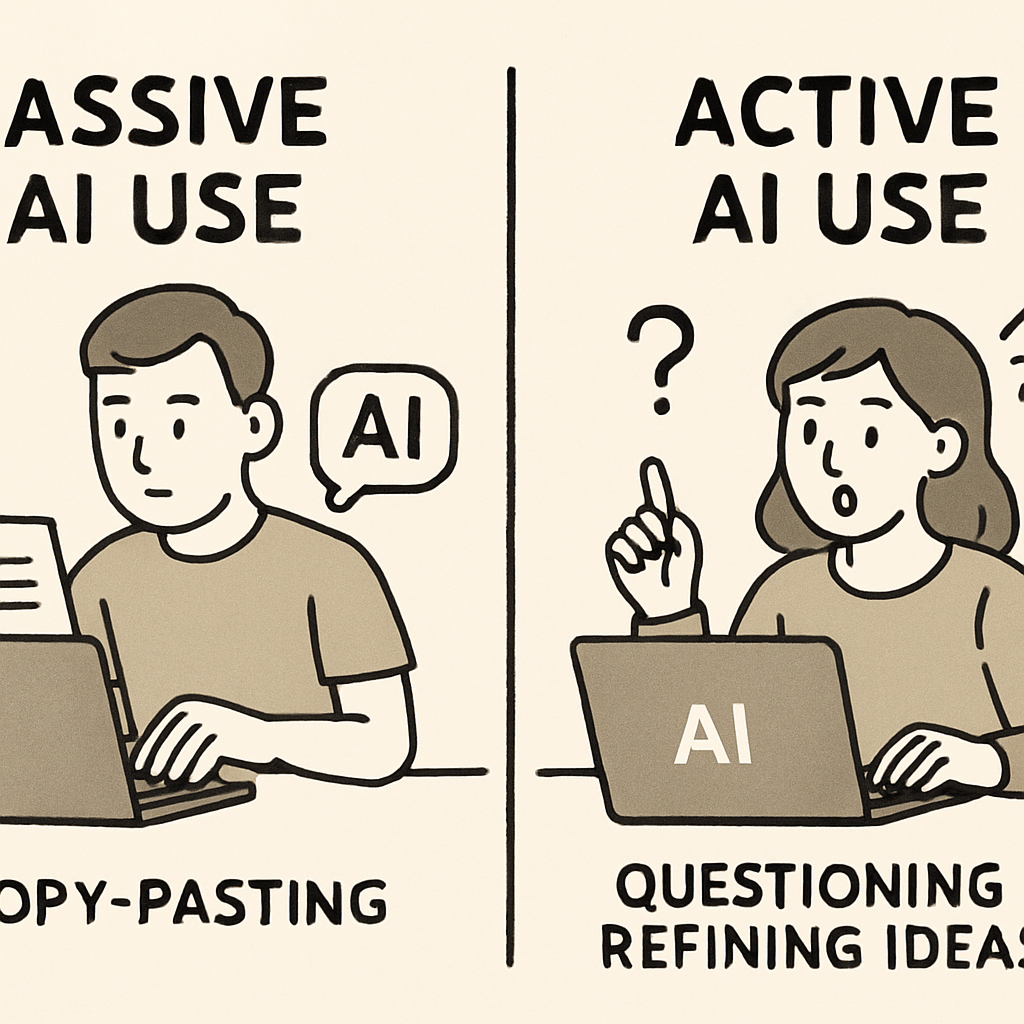

Preparing for the 2030 Job Market: AI Literacy as a Foundational Skill

For generations, academic success was largely measured by how much information a student could retain and recite during an exam. However, in an era where any fact is retrievable in seconds, the value of rote memorization is plummeting. We are moving toward a skill-based learning model where the competitive advantage isn’t knowing the answer, but knowing how to ask the right question to find it. Just as reading and basic mathematics became non-negotiable requirements for the 20th-century workforce, “AI Literacy” is rapidly becoming the foundational skill for the economy of 2030.

This new literacy goes far beyond knowing how to log into a chatbot or generate a funny image. It requires treating the AI as a collaborator that needs precise instructions, a skill often called “prompt engineering.” While that term sounds technical, it is actually an exercise in logic and communication. If a student cannot clearly articulate what they need, the AI cannot help them. Therefore, learning to control these tools forces students to organize their thoughts, define their goals, and communicate with exceptional clarity—skills that are valuable whether they are talking to a machine or a human manager.

To prepare for this shift, educators and parents should focus on four pillars of AI proficiency that apply across every subject:

- Verification: The habit of cross-referencing AI answers with trusted sources to catch hallucinations or errors.

- Prompting: The ability to break complex problems into step-by-step instructions that a machine can execute accurately.

- Ethics: Understanding when using AI is appropriate assistance versus when it crosses the line into academic dishonesty or plagiarism.

- Synthesis: The capacity to take the raw output from an AI and add human insight, emotional context, or strategic judgment to create a final product.

The students who master these pillars will not be replaced by technology; they will be the directors of it. Rather than competing against the machine’s processing power, they will leverage it to handle routine tasks, freeing up their mental energy for higher-level problem solving. As we embrace this new baseline for competency, the immediate challenge for parents is distinguishing between tools that actually build these skills and those that merely offer shortcuts.

How to Evaluate AI Learning Tools: A 5-Point Quality Checklist for Learners

Navigating the current marketplace of AI learning apps can feel like walking through a grocery store where every package claims to be “healthy,” yet the ingredients tell a different story. Not all educational software is built with the same goal: some products are designed purely for engagement, using gamification to keep users clicking, while others are built with genuine pedagogical intent. The crucial difference is whether the tool is designed to bypass the struggle of learning by simply providing answers or engineered to guide the student through the thought process. To avoid filling a student’s digital diet with “educational junk food,” we need a rigorous standard for evaluating quality.

When considering a new AI tool for yourself or a student, look beyond the flashy marketing and test the software against this 5-point quality checklist:

- Pedagogical Intent: Does the tool encourage “productive struggle”? Good AI prompts the user to think (e.g., “What do you think happens next?”) rather than just correcting mistakes instantly.

- Feedback Quality: Look for “scaffolding” rather than solutions. The AI should offer hints or explain why an answer is wrong, rather than simply marking it red and moving on.

- Adaptive Depth: True personalization means the difficulty adjusts in real-time. If a student breezes through a topic, the AI should automatically introduce more complex concepts to prevent boredom.

- Data Transparency: Check the terms of service. High-quality educational tools will explicitly state that user data is not sold to advertisers or used to train public models without consent.

- Human Connection: Does the tool offer a summary for parents or teachers? The best AI acts as a bridge, alerting a human mentor when a student is stuck or excelling.
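For anyone comparing several apps, the checklist above can be kept as a simple pass/fail record. The sketch below is one hypothetical way to do that. The `review_tool` function and the sample answers describe an imaginary app, not a real product, and any item left unanswered is treated as a fail.

```python
CHECKLIST = [
    "Pedagogical Intent",
    "Feedback Quality",
    "Adaptive Depth",
    "Data Transparency",
    "Human Connection",
]

def review_tool(answers: dict[str, bool]) -> list[str]:
    """Return the checklist items a tool fails, counting unanswered items as fails."""
    return [item for item in CHECKLIST if not answers.get(item, False)]

# Hypothetical review of an imaginary app; "Human Connection" was never checked.
answers = {
    "Pedagogical Intent": True,
    "Feedback Quality": True,
    "Adaptive Depth": False,
    "Data Transparency": True,
}
print(review_tool(answers))  # ['Adaptive Depth', 'Human Connection']
```

Treating missing answers as fails is a deliberately cautious design choice: a tool only passes once every point has actually been checked.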

Selecting the right tool turns technology from a potential crutch into a powerful tailored tutor. By prioritizing software that values the learning process over simple engagement metrics, we ensure that AI serves as a foundation for growth rather than a shortcut to a grade. This careful selection process sets the stage for a broader strategy on how to integrate these high-quality tools into a comprehensive learning plan.

Your Roadmap to AI-Driven Mastery: How to Ensure Quality in Your Own Learning

The shift from viewing artificial intelligence as a shortcut to recognizing it as a personalized tutor marks the true beginning of your modern learning journey. You no longer need to worry that these tools will simply replace the rigor of traditional schooling; instead, you now possess the insight to use them as scaffolding for deeper understanding. The difference between a hollow interaction and a transformative lesson lies entirely in your approach—shifting from passively requesting answers to actively engaging in a dialogue that challenges your critical thinking.

Consider this the final calibration of your educational GPS. While AI can calculate the fastest route to a solution and identify gaps in your knowledge, it cannot steer the car for you. If you stare only at the screen, you risk losing your internal sense of direction. High-value learning requires you to remain firmly in the driver’s seat, using the technology to navigate complex concepts without outsourcing the mental effort required to master them. The algorithm provides the map, but your human curiosity provides the fuel.

To ensure the future of education serves your goals, put this human-first philosophy into practice immediately. Start by picking a complex topic and asking an AI to explain it using a specific analogy, then verify its output against a trusted source to sharpen your skepticism. Next, challenge the tool to quiz you on that material, forcing you to recall information rather than just reread it. By taking these steps, you build essential AI literacy and define for yourself the quality of education that comes with AI: not a product you consume, but a capability you actively cultivate.