The Fast Rise of AI Policy in School Districts

Last Tuesday, a tenth-grade English teacher in Ohio sat down to grade thirty essays on The Great Gatsby, only to realize they all sounded eerily similar, and a little too perfect. Every comma sat right where it belonged, and the vocabulary reached well beyond a typical sophomore’s grasp. Yet traditional plagiarism checkers flagged nothing, because the essays were not copied from any existing source. That gap shows why cheating rules written around copy-and-paste no longer fit modern classrooms.

Usually, school board policies take months of committee meetings and public hearings to change. Now administrators are trying to rewrite them in days or weeks to catch up with a technology that was barely in public hands when many current policies were written. Watching districts craft AI policy on the fly reveals a clash between the slow gears of civic bureaucracy and the lightning-fast adoption of new digital tools by tech-savvy teenagers.

Generative AI works primarily as a prediction tool, much like a super-powered version of the auto-complete feature on your smartphone. Rather than copying and pasting existing articles from a search engine, these programs repeatedly guess the most likely next word, based on patterns learned from enormous collections of books and websites.
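To make the auto-complete comparison concrete, here is a toy sketch in Python. It is a deliberate simplification and an assumption on our part: modern chatbots use large neural networks, not the simple word-pair (bigram) counts below, and the function names `train_bigrams`, `predict_next`, and `generate` are hypothetical. It illustrates only the core idea of repeatedly choosing the most likely next word.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional

def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count, for each word, which words followed it in the sample text."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word seen most often after `word`, or None if it was never seen."""
    options = follows.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]

def generate(follows: Dict[str, Counter], start: str, length: int) -> str:
    """Greedy 'auto-complete': keep appending the single most likely next word."""
    words = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, words[-1])
        if nxt is None:  # no known continuation, so stop early
            break
        words.append(nxt)
    return " ".join(words)

# A tiny made-up "training library"; real systems learn from vastly more text.
sample = "the cat sat on the mat the cat ate the fish"
model = train_bigrams(sample)
print(predict_next(model, "the"))  # "cat": it follows "the" twice, more than any other word
print(generate(model, "the", 4))
```

The toy only continues patterns it has counted; it never retrieves a stored article, which is the distinction the paragraph draws between generating text and searching for it.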

Because the software produces new sentences rather than copying existing ones, the plagiarism checkers schools have long relied on cannot catch it, and educators find themselves locked in a fierce debate over whether to ban the technology or embrace it. Parents sitting around the kitchen table are asking a fundamental question about the future of AI in education: if your child uses a computer program to brainstorm an outline for a history paper, is that a valuable modern skill or an unfair digital shortcut? According to recent surveys of district leaders, this “cheating versus tool” dilemma remains their biggest immediate hurdle.

Outright bans rarely work, much like earlier attempts to outlaw calculators in the nineteen-eighties or Wikipedia in the early two-thousands. Because the old rules cannot stop students from reaching these powerful new resources, administrators must now decide what it actually means to learn and to demonstrate knowledge. The behind-the-scenes work happening in your local schools right now will shape how districts protect student data while preparing students for a rapidly changing digital world.

Why Last Year’s Tech Rules Don’t Work for This Year’s Homework

For years, keeping kids on task online was a simple game of digital whack-a-mole. School internet filters acted like strict librarians, blocking known distractions against a preset list of web addresses. If a student tried to visit a banned site, a red stop sign popped up. But that strategy depends on a technology landscape that vanished the moment ChatGPT launched.
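For readers curious how the older approach worked, here is a minimal Python sketch of a blocklist filter. It assumes a simple domain list: the `BLOCKED_DOMAINS` entries and the `is_blocked` function are hypothetical placeholders, and commercial filters typically rely on vendor-maintained category lists and other signals. Like the filters described above, it checks only the address a student visits, never what the page does.

```python
from urllib.parse import urlparse

# Hypothetical blocklist for illustration only.
BLOCKED_DOMAINS = {"games.example.com", "videos.example.net"}

def is_blocked(url: str) -> bool:
    """Return True if the URL's host, or any parent domain of it, is on the blocklist."""
    host = (urlparse(url).hostname or "").lower()
    parts = host.split(".")
    # Build "a.b.c", "b.c", "c" so subdomains of a blocked site are caught too.
    candidates = {".".join(parts[i:]) for i in range(len(parts))}
    return not candidates.isdisjoint(BLOCKED_DOMAINS)

for url in ["https://games.example.com/play", "https://docs.example.org/essay"]:
    print(url, "->", "BLOCKED" if is_blocked(url) else "allowed")
```

The second address passes simply because it is not on the list. A filter like this has no way to know whether an allowed page, such as an online document editor, offers a built-in AI writing assistant, which is the gap the following paragraphs describe.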

The core problem is that yesterday’s rules were built for finding information, not creating it. Traditional search engines act like a library catalog, pointing students toward existing articles they still have to read and synthesize. Generative AI acts more like a personal ghostwriter. Instead of pointing to a website about the Civil War, it writes a custom essay on the spot by predicting the most likely sequence of words.

Trying to block this new capability is like trying to catch rain in a leaky bucket. Because AI features are now built into word processors and ordinary search bars, administrators can no longer simply ban a single website. That is why updating acceptable use policies for AI means fundamentally redefining what counts as independent student work.

Realizing that walls and filters are failing, administrators are heading back to the drawing board. A modern district AI policy shifts the focus from blocking software to teaching responsible behavior. And because adults often learn these tools more slowly than teenagers do, some forward-thinking schools are trying an unexpected strategy for drafting the rules.

When Students Lead the Way: The Real Story of Districts Asking Kids to Write the Policy

Usually, school board meetings feature a predictable cast of adults making decisions for children. But when administrators in one Midwestern town realized their teenagers understood generative AI better than the IT department did, they flipped the script. Rather than guessing how students were using these predictive tools, the district asked students to draft its AI policy.

This shift reflects a growing approach sometimes called collaborative AI governance. Instead of treating policy-making as a closed-door legal exercise, administrators invite teachers, parents, and, most importantly, students to co-write the rulebook. It is a practical admission that when technology evolves weekly, the people actively using it in the classroom are the most valuable guides.

Bringing teenagers to the drafting table can yield surprisingly mature insights. When students help design the boundaries, districts stand to gain four distinct advantages:

- Identifying real-world uses, like prompting an AI to explain a tough math concept instead of just asking for the answer.

- Clarifying the fuzzy line between a “helpful tutor” and an “academic shortcut.”

- Spotting technological loopholes that adults simply would not think to look for.

- Fostering a culture of transparency rather than a game of digital hide-and-seek.

When kids feel ownership over a set of rules, compliance tends to rise and the urge to cheat tends to fall. Students are no longer just following arbitrary orders from an out-of-touch administration; they are upholding a community standard they helped build.

However, even the most thoughtful student-led guidelines cannot address the legal risks lurking beneath the surface. Teenagers can help define acceptable use for homework, but adults must still step in to shield them from unseen corporate data harvesting. That reality forces districts to look beyond the classroom and tackle the legal complexities of data privacy.

Protecting the Digital Footprint: Solving the AI Privacy Puzzle Without a Law Degree

Every time a middle schooler asks a public chatbot to help brainstorm a history project, they leave behind an invisible trail. This digital footprint goes beyond simple web history, capturing their original thoughts, writing styles, and personal details. While parents mostly worry about cheating, the quieter threat is what happens to that information once the browser tab closes.

For years, schools have relied on a secure “walled garden” approach to educational software. Inside this closed system, districts tightly control who sees student grades and assignments. Consumer-grade generative AI breaks through those walls, because students type their work into platforms the district does not control. When a teenager pastes an essay into a public AI tool, that writing leaves the district’s garden and may be stored in a commercial database indefinitely.

That transfer matters because many consumer AI services may use what people type to train future versions of their models, sometimes by default. If a student feeds a chatbot a personal narrative about a family struggle, that deeply personal story could become training data. Administrators realize that without strict boundaries, their students may unknowingly serve as free, unconsenting data providers for billion-dollar corporations.

Stopping this drain requires platforms that respect long-standing privacy laws such as the Family Educational Rights and Privacy Act (FERPA). To keep AI tools FERPA-compliant, administrators are seeking enterprise or education versions whose terms commit the vendor not to use student data for model training and to limit how long it is retained. Evaluating classroom AI tool safety now means confirming that a program acts as a private tutor rather than a corporate data miner.

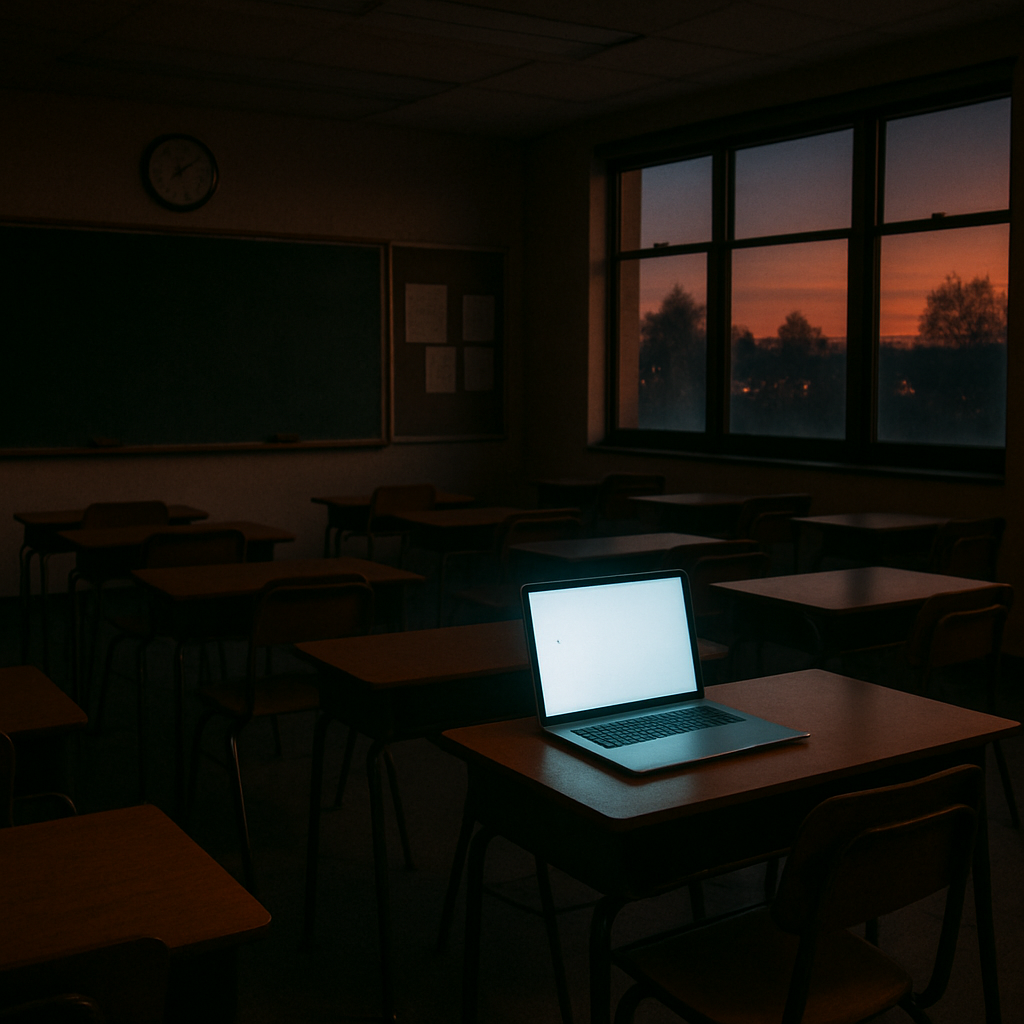

Paying for these secure platforms creates a new challenge for school boards on tight budgets. While wealthy districts can buy protected licenses, underfunded schools may inadvertently push students toward free, data-hungry alternatives. That disparity shows why educational equity now requires much more than distributing basic hardware.

Closing the New Digital Divide: Why AI Equity Is More Than Just Giving Out Laptops

Ten years ago, the “digital divide” meant figuring out which students had laptops and high-speed internet at home. Today, school boards face a less visible hurdle that some call the intelligence gap. Even if every student uses the same school-issued Chromebook, wealthy families can buy subscriptions to the most advanced generative AI models, while low-income students must rely on more limited free versions.

Preventing this uneven playing field requires more than handing out hardware. Many administrators now argue that premium AI access is becoming as essential to modern homework as a reliable Wi-Fi connection. When only some teenagers have high-powered platforms to brainstorm debate topics or outline complex science projects, a second-class education system quietly takes shape right under our noses.

Fortunately, closing AI equity gaps is becoming a central mission for proactive districts. Some are pooling their budgets to buy secure, top-tier software licenses that give every child access to the same powerful tools. Leveling the playing field this way helps ensure that a family’s income does not determine the quality of a student’s digital tutor.

Equal software access, however, accomplishes little if the adults in the classroom cannot guide its use. School leaders increasingly recognize that investments in access must be paired with solid technological literacy for educators.

From ‘No AI’ to ‘Know AI’: How Teachers are Training for a Skill That Didn’t Exist

The question of whether AI brainstorming is a valuable modern skill or a digital shortcut has forced a major pivot in education. Last fall, many school boards saw it as a shortcut and scrambled to ban these tools outright. Now administrators recognize that avoiding the technology is impossible, prompting a shift toward “AI Literacy”: teaching educators how to safely navigate tools that did not exist when they earned their teaching degrees.

Equipping classrooms for this shift requires extensive professional development. Before educators can guide students, districts are training staff on generative AI risks, such as data privacy vulnerabilities and algorithmic bias, in which the software reproduces human prejudices found in the internet text it learned from. This foundation lets schools set clear guidelines for ethical AI use that help keep a student’s digital footprint secure while they explore these tools.

The biggest hurdle for these newly trained teachers involves completely redesigning how they measure student success. Instead of relying on traditional “gotcha” grading that only evaluates a final, potentially AI-generated essay, educators are shifting to “Process-Based Grading.” This method assesses the critical thinking that happens during the assignment rather than just checking the polished output at the very end.

When a classroom embraces this transparent approach, grading typically transforms in three specific ways:

- Draft Checks: Teachers review early outlines and rough ideas alongside the student.

- Verbal Defense: Students must be able to explain the vocabulary and arguments used in their submitted work.

- Prompt Evaluation: Students are graded on how safely and cleverly they instructed the AI to help them, treating the software as a supervised research assistant.

Surprisingly, adapting to this new landscape is also offering exhausted educators a lifeline against burnout. Many teachers are finding they can use the same programs to quickly draft parent newsletters or outline weekly lesson plans, cutting down on administrative paperwork. Balancing these benefits with student safety requires prompt action, which is leading many school boards to issue interim guidance rather than wait for long-term policies.

The ‘Interim Guidance’ Shortcut: How Districts Deploy Policies in Weeks, Not Months

Changing a school board policy normally requires months of committee debate before a single word is revised. But when new technology lands on students’ phones overnight, that timeline invites chaos. Administrators realized they could not wait until the end of the semester to decide whether using a chatbot for math homework counted as cheating.

To keep up, districts are relying on a practical shortcut. Instead of carving permanent rules in stone, they issue interim AI guidance for educators that sets temporary, flexible boundaries. Administrators treat these policies as “living documents,” explicitly designed to evolve month by month as the software changes.

This adaptability lets a school district “beta test” its rules inside real classrooms. In a typical draft-test-edit cycle, a principal might authorize an AI tool for outlining essays in October, only to discover privacy issues by November. Because the policy is a living document, leadership can immediately revoke access and adjust the rules without a formal, months-long vote.

When superintendents use rapid-response AI policy templates, they prioritize immediate safety over long-term philosophy. A strong interim checklist typically defines:

- Approved Applications: Which specific programs are cleared for student use on school Wi-Fi.

- Privacy Boundaries: Strict rules prohibiting the entry of personal information into any AI prompt.

- Transparency: Guidelines detailing how educators must notify parents when AI assists with grading or lesson planning.

Establishing these adaptable frameworks protects privacy while giving educators breathing room to learn. Yet even with flexible guidelines in place, teachers still face the daily challenge of enforcing them, a task made far more frustrating when districts discover that the detection software they bought cannot reliably separate human effort from machine output.

Beyond the Red Pen: Why AI Detectors are Failing and What’s Replacing Them

When ChatGPT first hit classrooms, the immediate administrative reflex was to buy a digital shield. Districts spent thousands of dollars on software that promised to scan student essays and flag machine-generated homework, a modern equivalent of a teacher’s red pen. Administrators quickly learned, however, that pitting one algorithm against another creates a deeply flawed system.

The biggest flaw in this armor is the heartbreaking reality of “false positives,” which occur when detection software incorrectly labels an original student essay as AI-generated. Imagine the kitchen-table conversation when an earnest eighth-grader receives a zero and an accusation of cheating simply because a conventional, straightforward writing style triggered a faulty algorithm.

Leaning on these unreliable scanners also starts a game of cat-and-mouse. Tech-savvy teenagers quickly learn “detection evasion,” deliberately inserting typos or tweaking phrases to trick the system. Uploading assignments to third-party scanners also raises serious student privacy questions. Parents are rightly asking what happens to their child’s intellectual property once it lands in a corporate database that may be used to train future algorithms.

Because of these compounding risks, some forward-thinking school boards are abandoning “gotcha” software altogether. Instead, they are preventing AI plagiarism by changing the assignment itself. Teachers are returning to old-school methods: brainstorming on paper, writing drafts during class time, or orally defending final projects. Grading the learning process, rather than only the finished product, reduces both the temptation to cheat and the payoff.

Moving away from heavy-handed policing forces districts to address the deeper human element of this technological shift. Rather than treating artificial intelligence purely as an adversary, schools must talk to students about responsible usage and focus on teaching genuine digital citizenship.

The Ethical Compass: Teaching Kids Digital Citizenship in a Bot-Filled World

Once educators realized banning artificial intelligence was impossible, the conversation shifted from policing to parenting. If a tenth-grader uses a chatbot to brainstorm a history paper, they need clear guidelines for ethical AI use in schools rather than outright prohibition. Teaching students to responsibly navigate technology is simply an updated version of traditional media literacy.

The first critical lesson is showing kids that chatbots can be confidently wrong. Because these tools are essentially advanced auto-complete systems guessing the most likely next word, they sometimes invent facts out of thin air, a phenomenon developers call “hallucinations.” A student blindly copying an AI-generated biography of Abraham Lincoln might hand in an essay claiming the president drove a car.

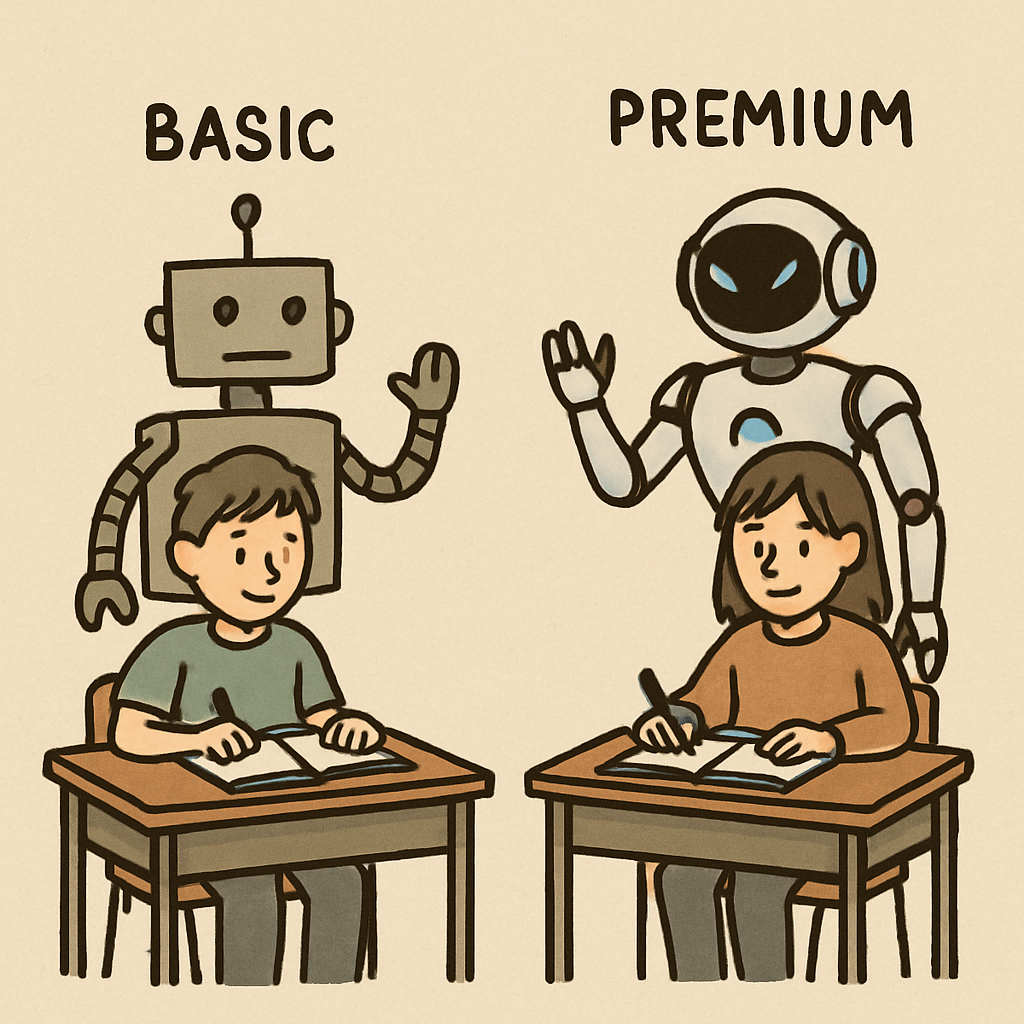

Beyond factual errors, students must understand algorithmic bias, which works like a mirror reflecting society’s flaws. AI systems learn from huge amounts of text gathered from the open internet, absorbing both our greatest knowledge and our worst prejudices. If a child asks a bot to write a story about a CEO and a kindergarten teacher, the machine might default to outdated gender stereotypes simply because those patterns appeared most often in its training data.

Countering these invisible prejudices requires adopting a “human-in-the-loop” writing model. This approach teaches teenagers to treat technology as a flawed assistant rather than an unquestionable expert. Real digital citizenship in the age of AI means the student remains the ultimate editor, independently fact-checking every claim and ensuring their original voice shines through the final draft.

Community involvement is essential to making this ethical compass a reality. As administrators rewrite homework policies to support these critical-thinking models, families should take an active role in shaping how these tools are integrated into local education.

Your Seat at the Table: Five Questions Every Parent Should Ask Their School Board

While the headlines focus on robots doing homework, the real story is how educators are actively protecting data, navigating legal risks, and rethinking learning. Because the stakes are high, parents and community members can help their districts answer complex questions about acceptable use and integration.

The best first step is to carry this conversation directly into the classroom. Talk to your child’s teachers about their personal approach to these predictive tools. Ask where they see generative AI helping students thrive and where they feel it hinders critical thinking. This creates a collaborative roadmap that supports educators rather than second-guessing them.

Beyond the classroom, evaluate your local leadership. Transparency is the clearest measure of accountability for any new administrative guidelines. You deserve to know whether your school board is following a deliberate implementation plan or simply hoping for the best while students outpace the rules.

To get involved in the future of learning, bring this actionable checklist of five questions to your next school board meeting:

- What is the exact timeline for publishing a public-facing AI roadmap?

- How are we protecting our students’ digital footprints from outside tech vendors?

- What professional training is being provided to help our teachers adapt?

- How is the district addressing algorithmic bias in new learning platforms?

- When will parents and community members be invited to join an AI policy committee?

Asking these questions helps hold districts to their fundamental responsibility to future-proof our students. Schools cannot simply ban their way out of this technological shift; they must actively teach students to navigate these tools ethically and safely as they head toward the modern workforce.

Starting with a simple teacher conversation can clarify how your school communicates its vision. The technology may be entirely new, but the core human values of curiosity, integrity, and foundational learning remain exactly the same. You now have the knowledge to ensure your district keeps those values at the very center of education.