Remember the familiar anxiety of staring at a blank sheet of paper, waiting for a tenth-grade history essay to write itself? For decades, our best digital solution was typing a question into a search engine and hoping a preexisting webpage held the exact answer. Today, technology has shifted from merely hunting down information to actually creating it from scratch.

Contrary to popular belief, modern artificial intelligence isn’t a giant encyclopedia or a glorified cheating machine. The technology powering these tools, known as a Large Language Model (LLM), functions more like the predictive text on your smartphone, just scaled up enormously. It predicts the most likely next word based on patterns it learned from vast amounts of human writing.
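
For readers who like to see the idea in action, the next-word guessing described above can be illustrated with a toy sketch. This is not how a real LLM is built: real models use neural networks trained on enormous text collections, while this example only counts which word follows which in one made-up sentence. The names `train_bigrams`, `predict_next`, and the sample `corpus` are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# A tiny, made-up "training set" (illustrative only).
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept ."
model = train_bigrams(corpus)

print(predict_next(model, "the"))      # cat
print(predict_next(model, "unicorn"))  # None
```

The toy model picks "cat" after "the" only because that pairing appeared most often in its training text, which is the same reason a much larger model can sound fluent without looking anything up.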

Generative AI in the classroom turns this predictive power into an interactive conversation partner. Parents often ask whether ChatGPT or Claude is better for schoolwork, but both can serve as creative sounding boards that help students organize their thoughts rather than bypass the work.

According to educational non-profits like Khan Academy, this shift unlocks dynamic new ways to learn. Interacting with responsive AI offers three primary benefits for student engagement: instant personalized feedback, lower frustration during complex tasks, and a judgment-free space to ask as many questions as needed.

Meet Your Very Fast Intern: What Really Happens When You Type a Prompt

Typing a simple question into a chat box often yields a bland, generic answer. Think of large language models not as all-knowing oracles, but as eager chefs waiting for a recipe. The instructions you give them—known as a prompt—determine the quality of the meal.

Without enough background information, the model has to guess what you want. This is where the “context window” comes in: it is the text the AI can take into account at once, which works like short-term memory during your conversation. By filling that space with rich details about your goals, you stop treating the AI like a basic search engine and start treating it as a true collaborator.

Because this communication takes practice, teaching students prompt engineering is becoming vital. The best results usually come from combining three essential ingredients:

- The Persona: Who should the AI act like? (e.g., “Act as a 5th-grade teacher.”)

- The Task: What do you need? (e.g., “Explain photosynthesis.”)

- The Constraint: What are the boundaries? (e.g., “Use under fifty words.”)
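
The three ingredients above can be sketched as a small helper that assembles them into a single prompt. This is a minimal illustration, not part of any particular AI tool: the function name `build_prompt` and its parameters are assumptions, and the resulting text would simply be pasted into whichever chat assistant you use.

```python
def build_prompt(persona, task, constraint):
    """Combine persona, task, and constraint into one prompt string."""
    parts = [
        f"Act as {persona}.",
        f"{task.rstrip('.')}.",        # make sure each part ends with one period
        f"{constraint.rstrip('.')}.",
    ]
    return " ".join(parts)


# The example values come from the three bullets above.
prompt = build_prompt(
    persona="a 5th-grade teacher",
    task="Explain photosynthesis",
    constraint="Use under fifty words",
)
print(prompt)
# Act as a 5th-grade teacher. Explain photosynthesis. Use under fifty words.
```

Keeping the three parts separate makes it easy for a student to change one ingredient at a time and compare how the answer shifts.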

Armed with this specific recipe, the technology transforms from an unpredictable novelty into a highly customized assistant. Once you understand how to guide these tools effectively, you can begin to see their true potential.

One Lesson, Thirty Ways: How Teachers Use AI for Personalized Learning Paths

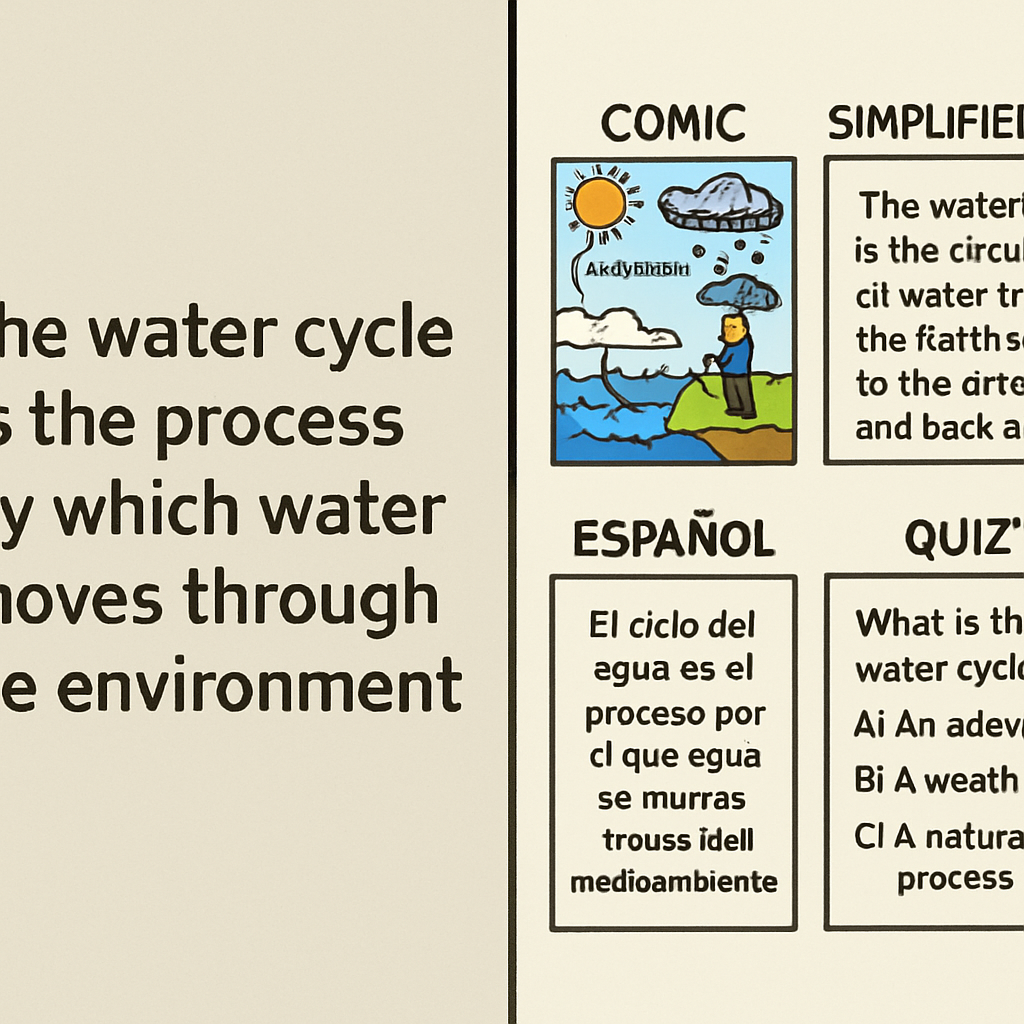

Imagine a middle school science class where thirty students learn at thirty completely different speeds. Educators call adapting to these unique needs “differentiation,” but manually creating custom materials for every single child is virtually impossible for one person.

That exhausting hurdle is exactly where artificial intelligence steps in as a practical assistant. By using automated lesson-planning tools, educators can take a core concept and quickly generate multiple versions to fit whoever is sitting at the desk.

Building personalized learning paths with large language models takes only seconds. A teacher can feed standard text into the AI and ask it to:

- Lower the reading level for a struggling student.

- Translate the worksheet into Spanish for an ESL learner.

- Add soccer examples to capture an unengaged learner’s interest.
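
As a rough sketch of that workflow, the snippet below turns one piece of source text into three ready-to-paste prompts, one per adaptation in the list above. It does not call any AI service; it only builds the prompt text. The names `ADAPTATIONS` and `differentiation_prompts`, and the sample `lesson` sentence, are assumptions made for illustration.

```python
# One instruction per adaptation from the list above (wording is illustrative).
ADAPTATIONS = {
    "simpler": "Rewrite the text at a lower reading level.",
    "spanish": "Translate the text into Spanish.",
    "soccer": "Rewrite the text using soccer examples.",
}


def differentiation_prompts(source_text):
    """Return one prompt per adaptation, each embedding the same source text."""
    return {
        name: f"{instruction}\n\nText:\n{source_text}"
        for name, instruction in ADAPTATIONS.items()
    }


lesson = "Plants use sunlight, water, and carbon dioxide to make their own food."
prompts = differentiation_prompts(lesson)

for name, prompt in prompts.items():
    print(f"--- {name} ---\n{prompt}\n")
```

Because the source text stays the same in every prompt, the teacher can compare the generated versions against the original before handing anything to a student.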

Suddenly, much of the crushing burden of after-hours busywork lifts. Instead of spending endless nights rewriting the same worksheet four different ways, educators can focus their energy on actually connecting with their students.

While customizing education is thrilling, it naturally raises questions about how students are using these tools at home. If the technology can write this well for teachers, we must address the elephant in the classroom.

Moving Beyond the ‘Cheating’ Worry: 3 Ways to Ensure Academic Honesty

When students possess a tool that instantly writes essays, cheating becomes a primary fear. Schools initially relied on detection software to catch computer-generated papers, but these tools are notoriously unreliable and often falsely accuse honest students. Because policing every shortcut is impossible, educators are discovering that preventing AI-enabled academic dishonesty requires changing the assignment itself.

Shifting focus from a polished final product to the messy writing process addresses this problem. Instead of grading one final paper, teachers evaluate the brainstorming and outlining phases. This method pairs well with AI-assisted grading feedback, which gives teachers more time to guide a student’s early ideas rather than just correcting grammar at the end.

To maintain integrity, classrooms are adopting three specific “AI-Proof” assignment types:

- The Oral Exam: Students verbally defend their written arguments.

- The In-Class First Draft: Returning to pen and paper for initial thoughts.

- The AI Critique: Students generate an AI essay, then write a human critique exposing its weaknesses.

Embracing these methods transforms the machine from a cheating device into a learning tool. Students have to think critically about the generated text rather than blindly trusting it. Developing this skepticism is crucial, especially since these systems can present invented facts with complete confidence.

Trust but Verify: Why LLMs Hallucinate and How to Fact-Check with Students

Because AI systems are prediction engines, they sometimes act like confident storytellers who forgot the ending but refuse to stop talking. When an AI states an invented historical date as fact, we call it a “hallucination.” Addressing AI hallucinations in student research provides a vital opportunity to teach children that a professional-sounding answer isn’t always true.

Hidden prejudices within the technology also require our attention. Think of AI as a giant mirror reflecting the internet; if its training data contains stereotypes, the machine repeats them. Mitigating algorithmic bias in educational software means showing students how to question whose perspective might be missing from an AI-generated history summary.

To build healthy skepticism around these blind spots, educators use the ‘SIFT’ method for AI fact-checking:

- Stop: Pause before accepting the information.

- Investigate the source: Consider if an AI is the right tool for this specific fact.

- Find better coverage: Cross-reference the machine’s claims with trusted textbooks.

- Trace back: Locate the original context of any provided quotes.

Turning these mistakes into information literacy lessons ensures students control the technology. Once they master verifying outputs, they can learn to command these systems effectively.

The Classroom to Career Bridge: Why AI Prompting is the New 21st-Century Skill

Transitioning from fact-checking to future careers requires a mindset shift. While some worry that leaning on technology makes students lazy, early AI literacy is actually essential workforce preparation for an AI-driven economy. Think of giving instructions to an AI like delegating tasks to a very eager, very fast intern. The intern can draft a research outline in seconds, but only if the human manager knows exactly what to ask for and how to guide the results.

This cooperative dynamic illustrates the “human-in-the-loop” concept. Rather than replacing people, the technology works alongside them to brainstorm and solve complex problems faster. By thoughtfully integrating large language models into curricula, teachers provide a safe sandbox for students to practice this modern partnership. The goal isn’t letting the computer do the thinking, but using it to skip past the blank page so real creative work can begin.

Ultimately, a machine’s ability to predict words only increases the value of uniquely human traits. Empathy, critical thinking, and teamwork remain the ultimate competitive advantages for our children. Once we understand that these foundational soft skills are what truly steer the technology, we can confidently implement responsible AI policies.

Your 3-Step Action Plan for a Responsible AI Classroom

Instead of fearing this technology as a cheating machine, you can now guide students to use it as a collaborative thought partner. Anxiety transforms into empowerment when you establish responsible AI implementation policies in your home or classroom.

Many wonder: are AI tools safe for elementary students? Safety depends largely on adult guidance. Younger learners thrive when exploring age-appropriate, closed platforms alongside a trusted adult, treating the technology like a supervised tutor rather than an unsupervised playground.

To create your safe start plan, follow these three steps for parents and teachers:

- Experiment together: Co-pilot the AI to write a silly story before ever tackling homework.

- Set No-Go zones: Clearly define when AI is a helpful brainstorming buddy versus an off-limits shortcut.

- Focus on why, not what: Ask students to explain why the AI’s answer works, rather than just accepting what it generated.

Each time you explore these tools together, you build mutual confidence. As we embrace this new way of working, we must ask: if a calculator didn’t stop us from learning math, why would AI stop us from learning how to think critically and creatively?