Remember the first time you used a calculator in math class? There was widespread panic that students would completely forget how to add or subtract. Today, the rapid rise of ChatGPT is creating that same “calculator moment” for nearly every subject in school and task at the office. Rather than replacing human capability, this shift sets a new baseline for education and skills in the age of artificial intelligence.

Unlike a traditional search engine that just fetches existing links, generative AI creates new text by repeatedly predicting the next most likely word. Recent pilot programs using AI in education suggest that treating these tools as all-knowing experts is a mistake. By viewing the software as a 24/7 assistant instead, you can let it handle repetitive drudgery while you focus on high-level thinking.

Mastering human-AI collaboration requires shifting your daily workflow from creating everything from scratch to becoming a sharp editor. Using the “Bridge Method,” you tackle a familiar task by prompting the AI for a rough draft, then spend your energy refining and verifying those ideas. This practical transition turns an overwhelming technological leap into a manageable, everyday career upgrade.

Moving Beyond the Five-Paragraph Essay: Why Critical Thinking Trumps Memorization

For decades, success meant memorizing facts to write standard reports. Today, that model is flipping as we shift from producing raw text to acting as information curators. True critical thinking in the age of large language models means knowing how to direct a smart tool rather than just acting like one.

The reality is that chatbots act like well-read interns who occasionally lie with total confidence. Experts call this an “AI hallucination”: the software makes a highly confident but completely wrong guess. Recognizing these mistakes sharpens the contrast between personalized learning and standardized testing, because evaluating answers now matters far more than memorizing them.

Guarding against these convincing errors requires adopting a prompt-to-verification cycle for every task. You first provide clear directions, the system generates a rough draft, and then you independently fact-check its claims against trusted sources. Treating the computer’s output as a messy starting point rather than a finished product keeps your work accurate and reliable.

Once we stop treating these tools as perfect answer keys, their true educational value unlocks. Teachers can instead use them to build AI-driven personalized learning paths that adapt dynamically to how each student naturally learns. This critical shift in perspective opens the door to creating a revolutionary, round-the-clock support system for every learner.

The 24/7 Private Tutor: How Adaptive Technology Personalizes Learning for Every Student

Most of us know the frustration of sitting in a classroom where the teacher moves either too fast for you to keep up or too slowly to hold your attention. That one-size-fits-all approach is fading. Today, software acts as an endlessly patient assistant that adjusts to a student’s unique pace. By creating AI-driven personalized learning paths, these tools act like a 24/7 private tutor, detecting where a learner gets stuck and adjusting the lesson on the spot.

This shift away from rigid standardization highlights the real-world benefits of adaptive learning technology:

- Targeted gap detection: The system spots missed foundational concepts immediately.

- Scaffolded instruction: It acts as a guide, breaking complex problems into smaller, manageable steps rather than just giving the answer.

- Instant feedback: Students can practice and fail safely without fear of judgment.

In the debate over AI tutors versus human educators, the goal isn’t replacement but elevation. Because software handles the repetitive drilling, teachers are freed to step away from the whiteboard and become high-impact mentors. They can spend their time coaching students through creative challenges rather than grading basic worksheets. To thrive alongside these tools, learners must know how to interact with them effectively, which makes learning to ask the right questions the next major milestone.

Prompting the Future: Why ‘Asking the Right Question’ is the Most Valuable Skill of 2024

When people ask which AI skills are most essential for students, the answer is no longer typing code but giving clear directions. Think of a prompt like handing a recipe to a chef. If you just say “make dinner,” the result is completely unpredictable. Specifying your ingredients and flavor preferences gets you much closer to what you want. This practice, known as prompt engineering, is simply the art of asking better questions.

Mastering this requires the “Context-Task-Format” framework. Instead of making a vague request, you assign a role (context), state the objective (task), and explain how you want the answer delivered (format). For an educator, this looks like typing: “Act as an eighth-grade science teacher, explain photosynthesis, and write it as a short story.”
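
For readers who like to see a framework spelled out, the three parts can be sketched as a tiny Python helper. This is only an illustration under stated assumptions: the function name `build_prompt` and its parameters are hypothetical and do not belong to any AI product. It simply joins the context, task, and format into one prompt you could paste into a chatbot, and it refuses to build a prompt if any part is left blank.

```python
def build_prompt(context: str, task: str, output_format: str) -> str:
    """Combine the three Context-Task-Format parts into one prompt string.

    Hypothetical helper for illustration; not part of any chatbot's API.
    """
    parts = {"context": context, "task": task, "format": output_format}
    # Refuse to build a vague prompt: every part must be filled in.
    for name, value in parts.items():
        if not value.strip():
            raise ValueError(f"Missing {name}: a clear prompt needs all three parts")
    return f"Act as {context.strip()}. {task.strip()}. Format: {output_format.strip()}."


prompt = build_prompt(
    context="an eighth-grade science teacher",
    task="Explain photosynthesis",
    output_format="a short story",
)
print(prompt)
```

Keeping the three parts as separate inputs makes it easy to change one of them, such as swapping “a short story” for “a five-question quiz,” without rewriting the whole request.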

Even with a great recipe, the first draft might need adjustments. This is where iterative prompting comes in, turning a one-off command into a collaborative conversation. If the story feels too complicated, you just reply, “make it simpler,” refining the output together. This back-and-forth process is the secret to developing AI literacy for professionals.

Ultimately, human clarity is now vastly more valuable than technical programming knowledge. As these intuitive communication skills transfer from daily homework into the corporate office, the definition of a qualified worker is rapidly evolving.

The Reskilled Workforce: Navigating Career Shifts in an AI-Driven Economy

In five years, your job title might stay the same, but how you spend your Tuesday morning will be unrecognizable. When people ask how AI will change the future job market, the fear is usually about total job replacement. The more likely reality is augmentation: using a tool to work faster. Instead of an entire role disappearing, individual tasks are automated. Think of human-AI collaboration in the modern workplace like having a tireless intern: you can use it to draft routine emails, summarize long meeting notes, and brainstorm weekly schedules.

Because software now handles this repetitive heavy lifting, the definition of a valuable employee is shifting rapidly. Successfully reskilling the workforce for an AI-driven economy means leaning into abilities a computer cannot fake. The most sought-after, AI-resistant soft skills include:

- Empathy: Reading a client’s unspoken frustration.

- Negotiation: Finding middle ground between stubborn teams.

- Ethical judgment: Deciding if an automated action is actually fair.

- Creative problem-solving: Connecting seemingly unrelated ideas to fix a broken process.

- Adaptability: Pivoting your strategy when unexpected challenges arise.

Ultimately, transitioning from a “doer” of basic tasks to a “manager” of AI outputs requires strict human oversight. As we rely more heavily on these powerful new tools, applying our ethical judgment becomes the primary defense for spotting baked-in system biases and protecting personal privacy.

Ethical Guardrails: How to Spot Bias and Protect Privacy in Educational Software

While a software glitch deleting homework was once a student’s biggest fear, today’s classroom stakes are much higher. Because these systems learn by analyzing massive amounts of past human data, they often absorb historical prejudices alongside facts. This creates confident but skewed outputs, meaning a tool meant to help students might inadvertently penalize them based on flawed assumptions.

Spotting this embedded prejudice requires a sharp, critical eye. For instance, automated grading might routinely score non-native speakers lower simply because their phrasing differs from the system’s baseline data. Actively mitigating algorithmic bias in educational software means consistently questioning results that feel suspiciously rigid or disproportionately favor a specific demographic.
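
One rough way to start questioning results is to compare average scores across groups. The Python sketch below is an illustration only: the scores are made up, the group labels and the 5-point threshold are assumptions, and the function names are hypothetical. A large gap is a prompt for human review, not proof that the grader is biased.

```python
from collections import defaultdict
from statistics import mean


def average_score_by_group(records):
    """Return the mean score for each group label in (group, score) pairs."""
    scores = defaultdict(list)
    for group, score in records:
        scores[group].append(score)
    return {group: mean(values) for group, values in scores.items()}


def flag_score_gap(records, max_gap=5.0):
    """Return (gap, flagged): the spread between the highest and lowest
    group averages, and whether it exceeds the assumed max_gap threshold."""
    averages = average_score_by_group(records)
    gap = max(averages.values()) - min(averages.values())
    return gap, gap > max_gap


# Made-up essay scores from an imaginary automated grader.
sample = [
    ("native speaker", 88), ("native speaker", 92),
    ("non-native speaker", 78), ("non-native speaker", 82),
]
gap, flagged = flag_score_gap(sample)
print(f"Gap between group averages: {gap:.1f} points, needs review: {flagged}")
```

A check like this cannot explain why a gap exists. It only tells a teacher or administrator where to look more closely.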

Beyond unfair grading, feeding student information into these systems creates serious security risks. Ethical AI implementation in higher education and local schools demands two essential privacy rules: never enter personally identifiable details into a public prompt, and always verify that the software vendor commits not to use student inputs for future model training.
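
The first rule can be partly supported with a quick scrub before anything is pasted into a public chatbot. The Python sketch below is a minimal illustration, assuming only two kinds of identifiers: email addresses and US-style phone numbers. The patterns and the `redact` function are hypothetical and deliberately simple; they will miss names, addresses, and student ID numbers, so a human still needs to read the text before sharing it.

```python
import re

# Deliberately simple patterns; real personal data takes many more forms.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and US-style phone numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


note = "Contact the parent at jane.doe@example.com or 555-123-4567 about the essay."
print(redact(note))
```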

Technology simply cannot substitute for a teacher’s deep intuition. Human oversight acts as the vital final safeguard, ensuring a professional always reviews automated decisions before they affect a student’s record. Mastering this protective balance is the foundation of true AI literacy.

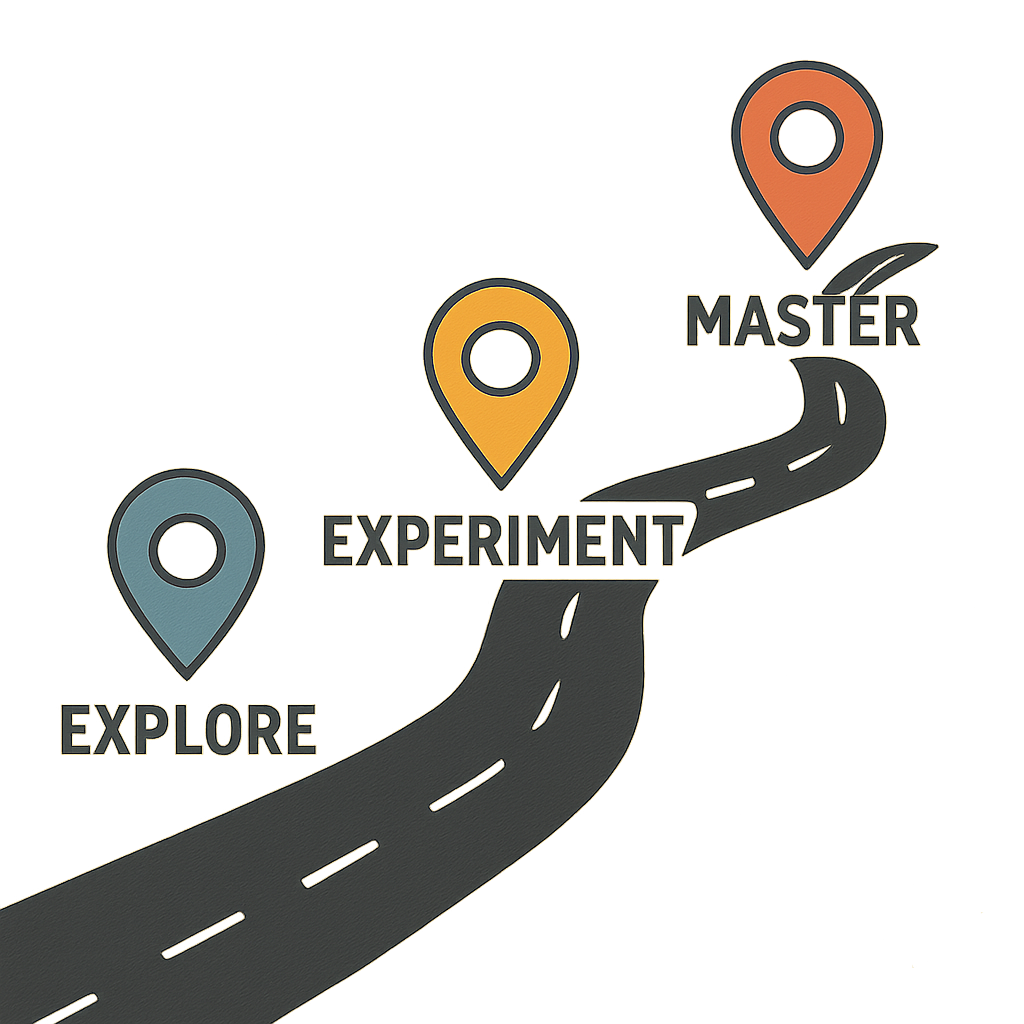

Your AI Literacy Roadmap: Three Steps to Future-Proof Your Skills Today

You no longer need to view artificial intelligence in education or the workplace as an intimidating tidal wave. You now have the mindset of an AI director. The secret to developing AI literacy for professionals isn’t learning computer code—it is simply learning to guide the tool confidently.

To move from a passive observer to an active, confident user, establish a personal 30-day AI learning plan using this checklist:

- Week 1 (Play): Let AI draft one routine email to see immediate results.

- Week 2 (Prompt): Practice giving specific instructions to improve its answers.

- Week 3 (Verify): Fact-check an output, treating it like a confident intern’s rough draft.

- Week 4 (Integrate): Make this tool a regular part of your weekly workflow.

Each time you practice, you gain the confidence to lead AI conversations at school or work. The future belongs to those who view this technology not as a replacement for human thought, but as the ultimate multiplier of human potential.