You don’t need a PhD in advanced mathematics to build your first artificial intelligence. Millions of people interact with popular chat tools and smart assistants every day, yet making the jump from simply using these products to actually building them feels like stepping into a highly technical, mysterious black box. In reality, creating an intelligent program is much closer to cooking a new dish than it is to solving complex calculus equations. Anyone who can follow a basic recipe and organize a simple spreadsheet already possesses the fundamental logic required to succeed in this field.

Peeking behind the curtain reveals a highly manageable system of ingredients and steps. Think of a computer as a high-powered oven waiting for directions. To bake something useful, you need high-quality ingredients and a clear instruction manual. In the technology world, your ingredients are the raw information you feed the system, and your manual is an algorithm—a specific set of step-by-step instructions. Instead of manually typing out every possible answer to a problem, you use data-driven logic to help the machine discover the right answers on its own.

Teaching this software how to behave is a process known as model training, and it looks a lot like tutoring a student. The movie recommendation systems on major streaming platforms like Netflix do not work because a programmer typed out exactly what each user wants to watch. Rather, the software acts like an observant student who reviews millions of past viewing habits. You give this student thousands of practice tests in the form of raw data, and eventually it learns the underlying patterns well enough to make a good guess at your next favorite show.

Asking what you should learn to create an AI system is the first step toward mastering a highly valuable skill. Broken down into simple, accessible pieces, machine learning is something anyone can learn from scratch. The following guide serves as an approachable roadmap for self-taught AI engineers, showing you the tools and concepts needed to turn that intimidating black box into your very first working prototype.

Why Python is the Universal Translator for AI Development

Since teaching a machine requires writing code, the immediate question is which language to learn first. For artificial intelligence, the answer is encouraging: Python, a language designed to prioritize human readability. Instead of forcing you to memorize complex computer jargon, it reads almost like plain English. This simplicity means you spend less time fighting with confusing grammar rules and more time focusing on what you actually want your program to do.

What makes this language truly powerful, however, isn’t just how it looks—it’s the massive community sharing pre-built toolkits called libraries. Imagine trying to build a house; instead of chopping down trees and forging your own nails, you simply go to a hardware store. In AI system development, libraries are those hardware stores. They provide ready-to-use shortcuts created by experts, allowing you to easily add advanced features to your project without needing to write thousands of lines of code from scratch.

To get started, you only need to familiarize yourself with the best data science libraries for beginners, which act as the foundational tools in your new toolkit:

- NumPy: Think of this as your digital calculator, built specifically to handle large groups of numbers quickly.

- Pandas: Your digital filing cabinet, perfect for organizing and reading data that looks like a basic spreadsheet.

- scikit-learn: The pre-packaged “student” model, providing ready-made algorithms for building simple AI.
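To make the first two tools concrete, here is a minimal sketch of the "calculator" and the "filing cabinet" at work. The viewer names and numbers are invented for illustration.

```python
import numpy as np
import pandas as pd

# NumPy: do arithmetic on a whole group of numbers at once.
ages = np.array([25, 32, 47, 51])
average_age = ages.mean()
ages_next_year = ages + 1  # adds 1 to every element, no loop needed

# pandas: a small spreadsheet-like table (made-up rows).
viewers = pd.DataFrame({
    "name": ["Ana", "Ben", "Cy", "Dee"],
    "age": ages,
    "hours_watched": [10.5, 3.0, 7.25, 12.0],
})

# Filter the table the way you would filter a spreadsheet column.
heavy_viewers = viewers[viewers["hours_watched"] > 7]

print(average_age)                      # 38.75
print(heavy_viewers["name"].tolist())   # ['Ana', 'Cy', 'Dee']
```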

Once you have these tools in hand, the next step is understanding how to actually use them to make sense of your information.

The ‘Hidden’ Math: Using Logic and Statistics to Find Patterns

Many beginners worry that artificial intelligence requires complicated equations, but the fundamentals of mathematics for machine learning are actually highly approachable. The secret is distinguishing between the math you must understand and the math the computer calculates for you. Instead of crunching numbers by hand, you use linear algebra to organize your information. You can think of matrix operations not as abstract math, but simply as a way to line up massive spreadsheets so the computer can process every row in a single operation.
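As a rough illustration of "lining up spreadsheets," the sketch below scores every row of a small table with one matrix operation. The house measurements and the weights are made-up values, not a trained model.

```python
import numpy as np

# Each row is one house: [size in hundreds of square meters, bedrooms].
houses = np.array([
    [1.0, 2.0],
    [1.5, 3.0],
    [2.0, 4.0],
])

# Hypothetical weights: how much each column contributes to a price score.
weights = np.array([100.0, 20.0])

# A single matrix-vector product scores every row at once.
scores = houses @ weights
print(scores.tolist())  # [140.0, 210.0, 280.0]
```

The computer does the multiplication; your part is deciding which columns go into the table.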

Once your information is organized, the system learns through a repeated adjustment process called gradient descent. Imagine standing blindfolded on a hill, trying to find the bottom of the valley just by feeling the downward slope beneath your feet. Linear algebra builds this landscape out of your data, while calculus is the hidden tool the algorithm uses to measure the slope and step downward toward the right answer.
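The blindfolded walk can be sketched in a few lines. This toy example assumes a very simple "hill," the curve (x - 3)², whose lowest point sits at x = 3; the starting point and step size are arbitrary choices.

```python
def loss(x):
    """Height of the hill at position x; the valley floor is at x = 3."""
    return (x - 3.0) ** 2

def slope(x):
    """Steepness of the hill at position x (the derivative of loss)."""
    return 2.0 * (x - 3.0)

x = 0.0               # where we start on the hill
learning_rate = 0.1   # how big each step is

for step in range(100):
    # Step in the direction that goes downhill.
    x -= learning_rate * slope(x)

print(round(x, 4))  # 3.0
```

Real models follow the same loop, only with millions of adjustable numbers instead of one.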

Every prediction the system makes is expressed as a probability, and statistics is how you judge how far to trust it. An AI rarely guarantees a perfect “yes” or “no” answer. Instead, it might decide it is 92% sure a photo contains a cat. That confidence score is then compared against a threshold you choose (for example, 50%), and the comparison determines the final result you see on your screen.
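Here is a small sketch of how a confidence score becomes a final answer. The function name and the default threshold of 0.5 are assumptions made for illustration.

```python
def decide(cat_probability, threshold=0.5):
    """Turn a confidence score into a yes/no style label."""
    return "cat" if cat_probability >= threshold else "not cat"

print(decide(0.92))                  # cat
print(decide(0.92, threshold=0.95))  # not cat
```

The same 92% score produces a different answer under a stricter threshold, which is why choosing the threshold is a design decision rather than a detail.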

Grasping these logical patterns frees you from needing an advanced degree to build working prototypes. Your real job is guiding the software and providing the right study material for it to process. Because these mathematical operations depend completely on what you feed into them, your success ultimately comes down to the data you use as fuel.

Data as Fuel: Why High-Quality Information is Better than Complex Algorithms

Imagine pouring contaminated gasoline into a high-performance sports car; no matter how advanced the engine is, the vehicle will sputter and stall. The same principle applies to AI system development, where the quality of your information usually matters more than the complexity of your algorithm. If you want to build an AI that actually works, you must adopt a “data-first” mindset. This means ensuring your information is not only accurate but also diverse. For example, if you only feed an AI pictures of golden retrievers, it will likely fail to recognize a poodle, creating a narrow-minded system that breaks in the real world.

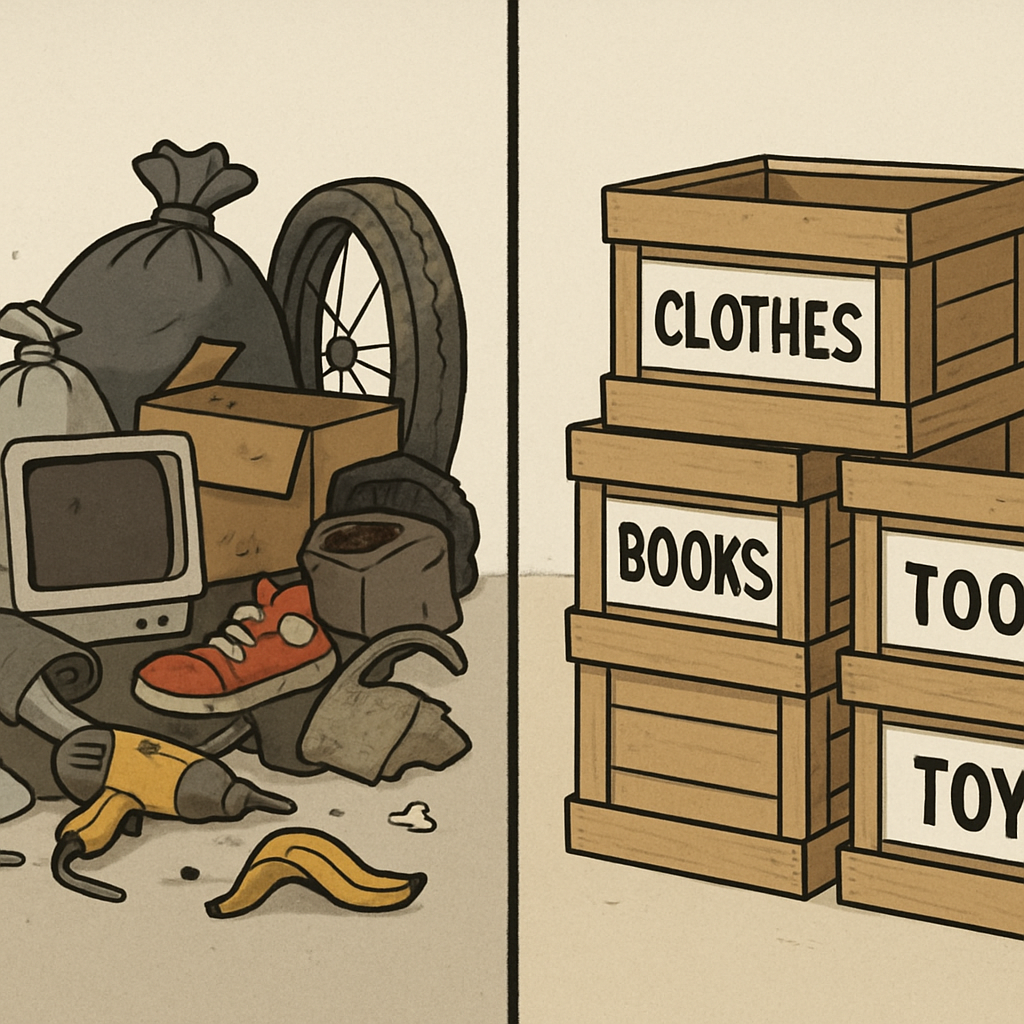

Before handing this information over to your computer, you must clean it up, a step known as data preprocessing. Preprocessing keeps the system from being confused by messy spreadsheets. To prepare your dataset, follow this four-step checklist:

- Remove duplicates: Strip out repeated entries so the AI doesn’t overvalue a single piece of information.

- Handle missing values: Fill in blank cells or delete incomplete rows so that gaps don’t cause errors or distort calculations.

- Scale numbers (Feature scaling): Adjust different measurements (like age and income) onto a similar scale so the system doesn’t assume larger numbers are automatically more important.

- Label your data: Tag your files with the correct answers (e.g., labeling an image “dog”) so the AI knows exactly what success looks like.

Fortunately, you do not have to perform these tedious cleaning tasks manually. Popular data science libraries act as digital assistants, allowing you to organize millions of rows with just a few simple coding commands. Once your fuel is purified and labeled, your engine is ready to run.
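The four-step checklist above might look like this in pandas. The column names and values are invented, and min-max scaling (squeezing each column into the range 0 to 1) is just one common way to do the scaling step.

```python
import pandas as pd

raw = pd.DataFrame({
    "age":    [25, 25, 40, None, 60],              # None marks a blank cell
    "income": [30000, 30000, 52000, 41000, 90000],
    "bought": ["no", "no", "yes", "no", "yes"],    # the label column
})

# 1. Remove duplicates (the first two rows are identical).
clean = raw.drop_duplicates()

# 2. Handle missing values: here we simply drop rows with no age.
clean = clean.dropna(subset=["age"]).reset_index(drop=True)

# 3. Feature scaling: put age and income on the same 0-to-1 scale.
for column in ["age", "income"]:
    low, high = clean[column].min(), clean[column].max()
    clean[column] = (clean[column] - low) / (high - low)

# 4. Labels: convert the answers into numbers the model can use.
clean["bought"] = clean["bought"].map({"no": 0, "yes": 1})

print(clean)
```

Dropping incomplete rows is the simplest option; filling blanks with a typical value (such as the column average) is a common alternative when you cannot afford to lose rows.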

Teaching the Machine: Choosing Between Supervised and Unsupervised Learning

Remember the labeled data we just prepared? It unlocks the most common approach in machine learning: supervised learning. Imagine teaching a student using flashcards. You show a picture, wait for their guess, then flip the card to reveal the correct answer. Computers learn similarly using a training set, a batch of examples where the right answers, or labels, are already provided. For classification tasks, like sorting daily emails into “spam” or “inbox,” this guided feedback loop teaches the machine how to predict outcomes accurately.
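A flashcard-style training set can be sketched with scikit-learn. The two features (number of links and number of all-caps words per email) and every value below are made up, and a nearest-neighbors classifier is only one of many models that could play the student.

```python
from sklearn.neighbors import KNeighborsClassifier

# Training set: [links in the email, ALL-CAPS words] with the answer attached.
features = [[0, 0], [1, 0], [0, 1], [7, 9], [8, 6], [9, 8]]
labels = ["inbox", "inbox", "inbox", "spam", "spam", "spam"]

# The "student": classify a new email by looking at its 3 closest examples.
model = KNeighborsClassifier(n_neighbors=3)
model.fit(features, labels)  # study the flashcards

# Two new emails the model has never seen.
predictions = model.predict([[1, 1], [8, 8]])
print(list(predictions))
```

The first new email sits next to the inbox examples and the second next to the spam examples, so the model labels them accordingly.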

Sometimes, however, you cannot practically tag millions of files with answers. That is where the choice between supervised and unsupervised learning comes in. Unsupervised learning removes the teacher entirely, asking the computer to find hidden connections in raw, unlabeled data. Instead of classifying known categories, it performs clustering. Think of dumping a box of mixed Legos on the floor without instructions; the machine groups the pieces by color or shape alone to make sense of the mess. Streaming apps can use this unguided method to cluster similar viewers together for movie recommendations.
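Clustering can be sketched without any library at all. The function below is a bare-bones, one-dimensional version of the k-means idea; the viewing hours and the two starting centers are assumptions chosen for illustration.

```python
def kmeans_1d(values, centers, rounds=10):
    """Assign each number to its nearest center, then move each center
    to the average of its group. Repeat a fixed number of rounds."""
    for _ in range(rounds):
        groups = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            groups[nearest].append(v)
        # An empty group keeps its old center.
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups

hours = [1, 2, 3, 20, 22, 24]  # weekly viewing hours, no labels attached
centers, groups = kmeans_1d(hours, centers=[0.0, 10.0])

print(centers)  # [2.0, 22.0]
print(groups)   # [[1, 2, 3], [20, 22, 24]]
```

Nobody told the program that "casual" and "heavy" viewers exist; the two groups emerge from the numbers alone.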

Selecting the right style depends entirely on whether you must predict a specific, known target or discover hidden patterns. Once you determine how the software will study its fuel, you need a digital structure to process those lessons.

Building Your First Brain: Neural Network Architecture Explained Simply

Moving beyond basic sorting calls for a system loosely inspired by the human brain: the neural network. Instead of a single formula making a guess, imagine a massive committee of tiny decision-makers called neurons. Each neuron looks at a small piece of your data, like a single pixel of a photograph, and passes its initial opinion down the line to the next group.

That middle space where all the investigating happens is made up of “hidden layers.” Think of these layers as a line of detectives working together. The first detective might only notice straight lines, the second looks for shapes like triangles, and the final detective combines those clues to confidently declare, “Those are pointy ears; this is a cat!” Information flows sequentially from the raw input, through these hidden investigators, straight to the final prediction.
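The flow from input, through a hidden layer, to a prediction can be sketched with NumPy. The weights below are hand-picked rather than learned, and the network is far smaller than anything used in practice; the point is only to show the direction the information travels.

```python
import numpy as np

def relu(x):
    """A neuron stays silent (0) unless its evidence is positive."""
    return np.maximum(0.0, x)

def sigmoid(x):
    """Squash any number into a 0-to-1 confidence score."""
    return 1.0 / (1.0 + np.exp(-x))

pixels = np.array([0.0, 1.0, 1.0])     # raw input: three pixel values

w_hidden = np.array([[1.0, -1.0],
                     [0.5,  1.0],
                     [0.5, -2.0]])      # 3 inputs -> 2 hidden "detectives"
w_output = np.array([2.0, 1.0])        # 2 hidden neurons -> 1 final verdict

hidden = relu(pixels @ w_hidden)        # what each detective reports
cat_score = sigmoid(hidden @ w_output)  # final confidence that it is a cat

print(hidden.tolist())      # [1.0, 0.0]
print(round(cat_score, 3))  # 0.881
```

Training is the process of replacing these hand-picked weights with values discovered by gradient descent.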

People often use intimidating buzzwords when talking about this process, but the term “deep learning” simply refers to a neural network that has many of these hidden layers stacked together. Because a deep network might contain millions of tiny detective neurons, standard computer processors quickly get overwhelmed. To run these complex models efficiently, developers rely on Graphics Processing Units (GPUs)—specialized hardware originally designed for video games that happens to be perfect for processing thousands of tiny calculations at once.

Fortunately, you do not need to wire millions of digital neurons together by hand. Modern software builders use deep learning frameworks, which are pre-packaged toolkits that handle the complicated mathematical wiring for you.

The Power Tools: Navigating TensorFlow vs. PyTorch for Projects

Now that you understand how digital detectives work together, how do you actually build them? Instead of writing complex math from scratch, programmers use deep learning frameworks to handle the heavy lifting. The two heavyweights in this space are TensorFlow and PyTorch. Think of them like iOS and Android: both help you build powerful tools, but they appeal to different builders with different needs.

Choosing between TensorFlow and PyTorch for deep learning projects depends largely on your ultimate goal. Both tools rely on “computation graphs,” essentially invisible flowcharts that track exactly how your data moves through the network. Historically they handled these flowcharts differently: PyTorch built them on the fly, while early versions of TensorFlow required you to define the whole graph up front. TensorFlow 2 now also runs step by step by default, but each toolkit still has distinct advantages:

- PyTorch: Best for learning and experimenting. It builds these flowcharts on the fly and feels almost exactly like standard Python, making it highly forgiving for beginners testing new ideas.

- TensorFlow: Best for massive industry applications. Backed by Google, it excels at “deployment readiness,” boasting a massive software ecosystem designed to seamlessly package your AI for web servers and smartphones.
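To show what a flowchart "built on the fly" means, here is a toy in plain Python. It is not how PyTorch or TensorFlow are implemented; the `Node` class is a hypothetical stand-in that records each operation as it runs and then walks the record backwards to work out slopes.

```python
class Node:
    """A number that remembers which operation produced it."""

    def __init__(self, value, parents=(), local_slopes=()):
        self.value = value
        self.parents = parents            # nodes this one was computed from
        self.local_slopes = local_slopes  # slope with respect to each parent
        self.grad = 0.0

    def __add__(self, other):
        return Node(self.value + other.value, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        return Node(self.value * other.value, (self, other),
                    (other.value, self.value))

    def backward(self, upstream=1.0):
        """Walk the recorded graph backwards, applying the chain rule."""
        self.grad += upstream
        for parent, local in zip(self.parents, self.local_slopes):
            parent.backward(upstream * local)

w, x, b = Node(3.0), Node(2.0), Node(1.0)
y = w * x + b   # the graph is recorded while this line runs
y.backward()

print(y.value)                 # 7.0
print(w.grad, x.grad, b.grad)  # 2.0 3.0 1.0
```

Those slopes are exactly what gradient descent needs, which is why frameworks track the graph for you.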

Fortunately, picking one toolkit over the other does not lock you in forever. Because both platforms share the same core concepts, mastering PyTorch makes learning TensorFlow significantly easier down the road. Once your digital brain is successfully built and trained using either option, the final hurdle is figuring out how to let the public actually use it.

From Code to Reality: Managing the MLOps Lifecycle and Cloud Deployment

Building a smart AI on your laptop is an exciting milestone, but it doesn’t help anyone if it stays hidden. To create real-world impact, you must make your project available over the internet, a process known as deployment. Think of deployment as opening a public storefront for the digital brain you just trained. Rather than buying expensive physical servers to host this shop, developers rent computing capacity on cloud platforms. These platforms can add or remove capacity on demand, so your application keeps running smoothly whether ten or ten thousand people visit.

That initial launch, however, is just the beginning of a continuous maintenance routine known as the MLOps lifecycle. MLOps, short for Machine Learning Operations, is the set of practices for deploying, monitoring, and updating models once they are in production. Once your system goes live, it constantly encounters unpredictable human behavior. Over time, models often experience “model drift,” meaning their accuracy drops because the real world has changed since their original training. Imagine teaching an AI to recommend popular cell phones in 2010; without regular updates, that system would be completely lost trying to suggest modern smartphones today.
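One simple way to watch for drift is to compare live accuracy against the accuracy measured at launch. The sketch below assumes you can eventually collect the true answers for recent predictions; the function names and the 5-point tolerance are hypothetical choices.

```python
def accuracy(predictions, actuals):
    """Share of predictions that matched what really happened."""
    correct = sum(p == a for p, a in zip(predictions, actuals))
    return correct / len(actuals)

def drift_detected(baseline_accuracy, recent_accuracy, tolerance=0.05):
    """Flag drift when live accuracy falls more than `tolerance` below launch."""
    return (baseline_accuracy - recent_accuracy) > tolerance

# At launch the model got every test example right.
baseline = accuracy(["a", "b", "a", "b"], ["a", "b", "a", "b"])

# This month it only gets half of the live examples right.
recent = accuracy(["a", "a", "a", "b"], ["a", "b", "b", "b"])

needs_retraining = drift_detected(baseline, recent)
print(baseline, recent, needs_retraining)  # 1.0 0.5 True
```

When the check fires, the usual response is to retrain the model on fresh data.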

To survive these shifting trends, creators must constantly monitor their applications and feed them fresh data. Yet, maintaining a highly accurate program is only half of the equation when releasing technology into the wild. As your application starts making live decisions that impact everyday users, you must also guarantee those automated choices are safe and objective.

The Ethical Blueprint: Preventing Bias and Ensuring Fairness in Your Systems

If data is the fuel powering your engine, what happens when you accidentally pump in contaminated gas? The system breaks down. In machine learning, this contamination takes the form of algorithmic bias: a program producing unfair outcomes because of skewed data. As you learn to build AI systems, recognizing these ethical pitfalls is just as crucial as writing code. A true “Responsible AI” mindset begins the moment you start gathering information.

Flawed data usually sneaks into projects through predictable traps. To protect your application, watch out for these common sources of bias in AI development:

- Sampling bias: Like surveying only dog owners about the best pets, this happens when your training data doesn’t properly represent everyone.

- Historical bias: If past human decisions were prejudiced, the AI will blindly copy and automate those bad habits.

- Algorithmic exclusion: This occurs when a system accidentally locks out certain groups, like a voice assistant failing to understand regional accents.

Catching these errors requires making fairness testing a standard part of your development routine. You don’t need complex math to start; simply ask, “Does this system perform equally well for people of different backgrounds?” By deliberately testing your prototype with diverse inputs, you make it far more likely that your digital creation helps rather than harms.
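That question can be turned into a quick check: measure accuracy separately for each group and look at the gap. The group names and test results below are invented, and a real audit would use far more examples and more than one metric.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: (group, prediction, actual) tuples. Returns accuracy per group."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    return {group: hits[group] / totals[group] for group in totals}

# Made-up voice assistant test results for two accents.
results = [
    ("accent_a", "yes", "yes"), ("accent_a", "no", "no"),
    ("accent_a", "yes", "yes"), ("accent_a", "no", "no"),
    ("accent_b", "yes", "no"),  ("accent_b", "no", "no"),
    ("accent_b", "no", "yes"),  ("accent_b", "yes", "yes"),
]

scores = accuracy_by_group(results)
gap = max(scores.values()) - min(scores.values())

print(scores)  # {'accent_a': 1.0, 'accent_b': 0.5}
print(gap)     # 0.5
```

A large gap like this one is a signal to collect more training data for the group the system serves poorly.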

Your 30-Day AI Roadmap: Where to Write Your First Line of Code Tomorrow

You no longer have to view artificial intelligence as a mysterious black box reserved for math geniuses. By demystifying what goes into creating an AI system, you have already shifted from being a passive consumer of technology to an aspiring builder. The biggest hurdle right now is not a lack of resources, but rather “paralysis by analysis.” To beat that overwhelm, you need to turn this new perspective into immediate, focused action.

Consider this your starter AI engineer roadmap for self-learners—a straightforward 30-day sprint designed to build your momentum:

- Week 1: Python Basics. Focus entirely on learning the “translator.” Practice writing simple variables, loops, and basic functions.

- Week 2: Math and Stats Basics. Don’t memorize complex formulas. Instead, learn the logic of probability and how algorithms find patterns.

- Week 3: Data Cleaning. Practice gathering and organizing your “fuel.” Learn to handle messy spreadsheets so a computer can easily read them.

- Week 4: Your First Model. Use a beginner-friendly library to train a basic program to recognize a simple pattern.

Your ultimate goal should be completing a “toy” project as quickly as possible. Don’t worry about creating the next ChatGPT just yet. Start with a simple action to see immediate results, like building a basic movie recommender or a program that sorts spam emails. This early victory bridges the gap between reading about theories and actually watching your computer learn from the data you provided.
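For a sense of scale, a toy movie recommender can fit in about twenty lines. This sketch assumes a tiny hand-written ratings table and a simple strategy: find the person whose tastes most resemble yours, then suggest what they liked that you have not seen. The names and ratings are invented.

```python
import math

ratings = {
    "you": {"Alien": 5, "Heat": 4, "Up": 1},
    "sam": {"Alien": 5, "Heat": 5, "Up": 1, "Blade Runner": 5},
    "lee": {"Alien": 1, "Heat": 2, "Up": 5, "Coco": 5},
}

def similarity(a, b):
    """Cosine similarity over the movies both people have rated."""
    shared = set(a) & set(b)
    dot = sum(a[m] * b[m] for m in shared)
    norm_a = math.sqrt(sum(a[m] ** 2 for m in shared))
    norm_b = math.sqrt(sum(b[m] ** 2 for m in shared))
    return dot / (norm_a * norm_b)

def recommend(user):
    """Suggest unseen movies rated by the most similar other person."""
    others = [name for name in ratings if name != user]
    twin = max(others, key=lambda name: similarity(ratings[user], ratings[name]))
    unseen = set(ratings[twin]) - set(ratings[user])
    return sorted(unseen, key=lambda m: ratings[twin][m], reverse=True)

print(recommend("you"))  # ['Blade Runner']
```

It is nowhere near a production system, but it does learn its suggestion from data rather than from a rule you typed in.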

You now understand the building blocks of AI well enough to take that first real step. Every time you write a line of Python or organize a row of data, you build confidence and reinforce the exact same foundation used by professionals worldwide. Open a beginner coding tutorial today, build your first digital student, and start applying these concepts to solve real problems.