Picture a high school student handing in a flawless, beautifully structured 1,000-word paper on the American Civil War. Yet when asked a basic question about their thesis in person, they stare blankly and cannot explain a single point. Now that tools like ChatGPT can generate text in seconds, the traditional take-home essay has become highly vulnerable to AI-assisted cheating.

Educators describe this disconnect as the “AI Gap”: the stark difference between a person’s ability to produce a polished final product and their actual understanding of the material. In practice, classroom teachers are finding that a perfect final assignment no longer guarantees real comprehension. Relying on an algorithm to write a paper is much like using a GPS to drive across an unfamiliar city; the technology gets the driver to the destination, but the driver never learns to navigate the roads themselves.

How do we fix an evaluation system where the final output is so easily manufactured? The emergence of this AI Gap is actually a valuable signal that society must fundamentally change how we measure human intelligence. Instead of vainly attempting to ban technology, forward-thinking schools and workplaces are adopting innovative AI-resistant assessments that evaluate a person’s messy, real-time thought process rather than just grading a sanitized document. By shifting the focus to how someone thinks in the moment, we can preserve academic honesty and ensure true learning actually survives the artificial intelligence revolution.

Moving from Measuring Results to Measuring Growth: What is an AI-Resistant Assessment?

To bridge the “AI Gap,” educators are adopting AI-resistant assessments. Rather than judging a polished final product that a chatbot easily generates, these evaluations focus on the human learning process. They measure the effort, the rough drafts, and the personal insights formed along the way.

Moving beyond memorized facts requires testing how individuals handle actual scenarios. This approach, known as authentic assessment, measures applied capabilities that are much harder for a machine to fake. Authentic assessment strategies for higher education or corporate training may sound academic, but the goal is practical: instead of asking people to define terms, these assessments ask them to resolve live customer complaints or defend business decisions.

This shift toward valuing the productive struggle of learning ensures fairness and builds future-proof skills. If a machine can complete an assignment in seconds, that task was merely testing our ability to follow basic instructions. Prioritizing genuine growth over robotic perfection requires rethinking traditional testing entirely.

Why ‘Write an Essay’ is Dead: The Danger of Generic Prompts

We have all seen generic assignments like “explain the causes of World War I.” If a chatbot generates a plausible response in three seconds, that task isn’t testing human intelligence. Broad questions rely on reciting established facts, which machines do quickly and fluently. To maintain academic integrity, we must stop asking people to act like basic search engines.

Creating AI-resistant writing prompts means adding contextual constraints: forcing the writer to connect a broad topic to a hyper-specific, personal situation. Consider how easily broken assignments can be fixed:

- Broken: Summarize The Great Gatsby. / Fixed: Compare Gatsby’s parties to our town’s recent summer festival.

- Broken: Write about climate change. / Fixed: Propose a solution for our neighborhood’s specific flooding issue.

- Broken: Explain supply and demand. / Fixed: Interview a local barista about pricing seasonal drinks.

- Broken: Analyze leadership. / Fixed: Relate chapter four to a recent decision you made at work.

Applying this “Personal Context” rule makes it far more likely that learners are actively thinking rather than mindlessly copying. An algorithm cannot interview your neighborhood barista or remember a local event. Requiring these real-world connections forces students into practical application. This mindset shifts testing from a memory game to an active demonstration of competence, paving the way for hands-on evaluations that prove actual capability.

The Driving Test Model: Using Authentic Assessments to Prove Capability

Think about getting a driver’s license. Passing the written exam shows you memorized the rulebook, but the actual road test proves you can safely merge onto a busy highway. This exact logic is reshaping how schools and workplaces measure intelligence today. We are transitioning from traditional multiple-choice tests to scenario-based challenges where individuals must actively demonstrate their skills.

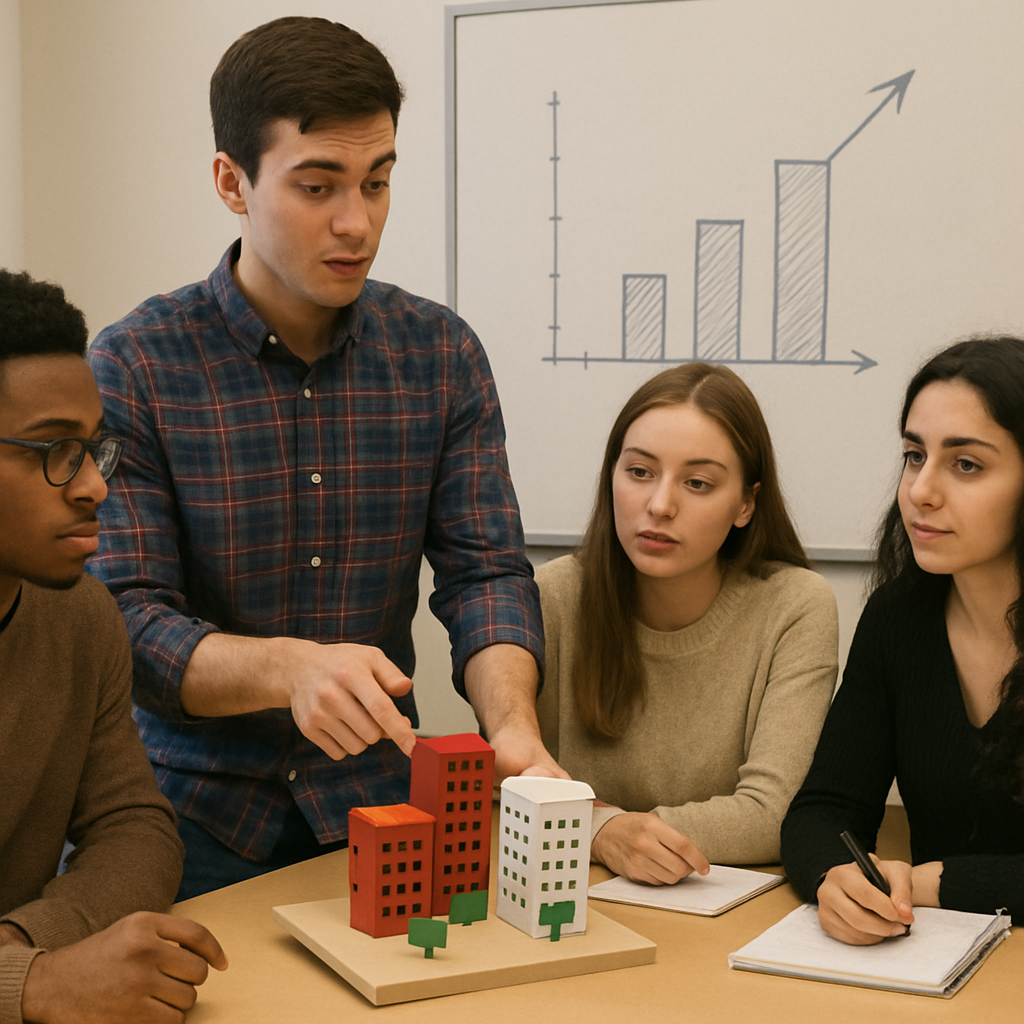

Instead of simply asking someone to summarize a topic, educators now use performance-based evaluation rubrics. These are clear scoring guides that measure how well a person executes a complex task in real time. For instance, rather than writing a business essay, a student might pitch a campaign to a mock client. Artificial intelligence can easily generate a textbook definition of marketing, but it often fails to perform a multi-step simulation that involves adapting to unpredictable human reactions.

Creating these “situated” tasks means placing the learner into a specific role to solve a tangible problem. These real-world application assessments require people to react and make decisions just as they would in their daily lives. When an assignment forces you to act as a local mayor handling a community budget crisis, relying on a machine’s generic output is no longer enough to succeed. Shifting focus toward this active application of knowledge discourages unauthorized shortcuts and reveals a learner’s true capabilities.

Grading the Journey, Not the Destination: Why Process-Oriented Learning Stops Cheating

Imagine inspecting a house’s final coat of paint without ever checking if the foundation is solid. Historically, a completed essay counted for the entire grade, making the temptation to use a shortcut overwhelming. Redesigning coursework for the generative AI era means changing this math. Educators are now shifting up to 50% of a final grade onto a project’s “working stages,” which reduces the incentive to cheat because a polished final product alone can no longer earn the full grade.

This method forces learners to leave a visible creative trail. By reviewing the messy steps of learning, instructors encourage students to show their mistakes as evidence of original thought. In practice, process-oriented grading often follows a three-step routine:

- Outline: A rough map of early, unpolished ideas.

- Annotated Bibliography: Source lists with personal notes explaining why each piece of research matters.

- Draft with Tracked Changes: A working document revealing exactly where sentences were deleted, revised, and polished.
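To make the grade math concrete, here is a minimal sketch of how a process-weighted grade could be computed. The function name `final_grade`, the 0 to 100 scale, and the equal weighting of the three working stages are illustrative assumptions, not a prescribed formula; only the 50% ceiling on the process share comes from the approach described above.

```python
def final_grade(process_scores, product_score, process_weight=0.5):
    """Blend working-stage scores with the final product score (0-100 scale).

    Hypothetical helper: stages are weighted equally, which is an assumption.
    process_weight is the share of the grade given to the working stages.
    """
    if not 0.0 <= process_weight <= 1.0:
        raise ValueError("process_weight must be between 0 and 1")
    if not process_scores:
        raise ValueError("at least one working-stage score is required")
    process_avg = sum(process_scores) / len(process_scores)
    return process_weight * process_avg + (1.0 - process_weight) * product_score


# Scores for outline, annotated bibliography, and tracked-changes draft.
print(final_grade([80, 90, 70], 90))  # 85.0

# A flawless final essay with no working stages submitted.
print(final_grade([0, 0, 0], 100))    # 50.0
```

Under these assumptions, a student who submits only a perfect final essay tops out at half the available grade, which is the incentive shift the process-oriented model relies on.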

Evaluating this journey gives strong evidence that the learner did the mental heavy lifting, because a machine cannot convincingly fake weeks of gradual human realization. Still, even with a documented trail of notes, face-to-face proof of understanding remains invaluable.

The 5-Minute Conversation: Why Oral Exams are Making a Modern Comeback

The fastest way to verify if someone truly grasps a topic is simply to talk to them. This human interaction forms the core of the viva voce, a Latin term meaning “with the living voice.” While traditionally reserved for high-stakes university defenses, educators are now adapting these verbal check-ins for everyday classrooms. These modern five-minute conversations offer a fast, highly AI-resistant way to check that a student actually wrote their submitted work.

Shifting from pure memorization to explanation completely changes how we measure intelligence. Compared with oral exams, traditional multiple-choice tests only show that a person can recognize a fact, whereas a live conversation shows whether they understand the underlying logic. Teachers keep these conversations low-stress by focusing on the student’s personal journey. Using these viva voce examination techniques, an instructor might ask, “Why did you choose this specific source?” rather than grilling the student on exact dates. If a machine generated the essay, the learner will likely stumble; if they did the actual reading, they will be able to defend their choices.

Defending ideas out loud does more than just catch shortcuts; it builds vital real-world confidence. Articulating a thought process under gentle pressure is a uniquely human capability. Yet, scheduling live one-on-one meetings for every assignment is not always practical, requiring creative formats that capture this authenticity asynchronously.

Beyond the Written Word: Using Videos and Multimodal Design

For decades, the standard five-paragraph essay was the ultimate proof of learning. Today, relying entirely on typed text is risky because artificial intelligence is built primarily to generate words. If an assignment only requires pressing “print” on a document, it becomes incredibly easy to outsource the thinking to a machine. To capture true understanding, educators are diversifying how knowledge is expressed beyond the basic written word.

Embracing this shift involves multimodal assignment design, which simply means combining different formats like audio, visuals, and physical movement. It is much harder for a student to pass off machine output as their own face or their own genuine, stumbling voice. To leverage those signatures of authenticity, teachers are replacing traditional papers with:

- Podcast interview

- Video tutorial

- Physical diorama

- Interactive map

- Hand-drawn infographic

Building physical artifacts or recording live audio requires the exact human elements that algorithms lack. By developing these personalized learning tasks for students, schools transform standard memory tests into vibrant, authentic showcases of capability. While seeing a face or hearing a voice ensures a human is doing the actual work, the ultimate safeguard requires tying those creative projects directly to local, lived experiences.

Making it Personal: Why Real-World and Local Tasks are AI’s Weakest Point

Even the most advanced artificial intelligence lives entirely inside a server, lacking personal memories or a physical zip code. If an assignment asks for a basic historical summary, a machine generates it instantly. However, if a teacher requires students to analyze a current event that happened less than twenty-four hours ago, the technology often stumbles. Models rely largely on older training data, so they struggle to process breaking local news or react to sudden neighborhood developments.

Moving education outside the classroom walls creates an environment where faking the work becomes virtually impossible. Instead of assigning generic essays, educators are using project-based learning to reduce plagiarism by asking learners to interview a local community member. A computer program cannot walk down Main Street, read the body language of a business owner, or ask empathetic follow-up questions. By forcing assignments into the physical world, the evaluation measures human connection rather than mere data retrieval.

Connecting these hyper-local tasks to someone’s family history or future career goals makes the effort deeply relevant. When educators design localized learning tasks, such as asking students to compare an economic theory to a grandparent’s career journey, they reduce the desire to take digital shortcuts. Linking academic concepts to an individual’s lived reality naturally sparks genuine engagement. These hands-on community experiences set the stage for deep reflection.

Fostering Critical Thinking Through Reflective Writing

Imagine a learner uses a modern text generator to draft a historical summary. What the software cannot fabricate is the genuine frustration of hitting a research dead-end or the sudden moment of clarity when grasping a complex idea. By fostering critical thinking through reflective writing, educators shift the spotlight from a polished final product to the uniquely human process of learning itself.

Evaluating this mental journey is quickly becoming as important as scoring the final exam. A machine might instantly output a flawless paragraph, but it cannot authentically describe the struggle to choose the right words. When students share honest narratives about their personal mistakes and subsequent improvements, teachers easily spot the genuine human spark. This method proves incredibly powerful for promoting academic integrity because it positions artificial intelligence as a visible brainstorming assistant rather than an invisible replacement.

Demanding that individuals examine how they reached their conclusions fundamentally changes the goal of education. We no longer just care that someone found the destination; we want to see the compass they used to navigate the woods. Valuing actual human growth over instantly generated perfection provides long-term benefits that outlast any single course.

From Information Recall to Critical Thinking: The Long-Term Benefit of AI-Proof Learning

True learning goes far beyond repeating facts. Upgrading evaluation methods isn’t just a defensive tactic to catch cheaters; it is a vital step toward future-proofing students for the modern workplace. By designing AI-resistant assessments, we actively shift the value toward how you think over what you can passively memorize.

Start seeing immediate results by applying a simple three-step audit to any current assignment to make it more AI-proof today:

- Add a personal link: Require the inclusion of personal experiences or highly local contexts.

- Add a process step: Ask to see rough drafts, initial sketches, or brainstorming notes.

- Add a verbal check: Follow up with a brief, live conversation where the learner explains their reasoning.
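For anyone who keeps assignments in a spreadsheet or course tool, the three-step audit above can be sketched as a simple checklist. The `Assignment` class, its field names, and the `audit` function are hypothetical names chosen for illustration; the three checks themselves mirror the list above.

```python
from dataclasses import dataclass


@dataclass
class Assignment:
    """Hypothetical record of which audit steps an assignment already includes."""
    title: str
    has_personal_link: bool = False
    has_process_step: bool = False
    has_verbal_check: bool = False


def audit(assignment):
    """Return the audit steps the assignment is still missing, in order."""
    checks = [
        ("Add a personal link", assignment.has_personal_link),
        ("Add a process step", assignment.has_process_step),
        ("Add a verbal check", assignment.has_verbal_check),
    ]
    return [step for step, present in checks if not present]


essay = Assignment("Causes of World War I", has_process_step=True)
for step in audit(essay):
    print(f"{essay.title}: {step}")
```

An empty result means the assignment already covers all three steps; anything returned is a prompt for a small redesign rather than a verdict on the assignment.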

Applying this audit does more than just secure a grade; it is a core strategy for promoting academic integrity in digital learning. These human-centered methods naturally foster deep learning, ensuring we cultivate better, more resilient thinkers who can navigate complex challenges rather than just generate quick answers.

Embrace a future that celebrates the messy, beautiful process of human thought rather than just a polished final product. After all, if an AI can do a task in seconds, was it really measuring human intelligence, or just the ability to follow instructions?